👋 Hi! I’m Bibin Wilson. In each edition, I share practical tips, guides, and the latest trends in DevOps and MLOps to make your day-to-day DevOps tasks more efficient.

One of the key focus areas for DevOps engineers is System Design.

In this edition, I want to share some essential design concepts related to etcd, a critical component of Kubernetes.

Before etcd became a core part of Kubernetes, I had set up an etcd cluster for service discovery (similar to Consul). Interestingly, etcd was created in 2013, a year before Google launched Kubernetes in 2014.

This edition will help you answer important Kubernetes etcd design questions, such as:

How does Kubernetes store its state in etcd’s key-value storage?

What are the storage limitations of etcd?

How would you design an etcd cluster to handle the failure of two nodes?

When should you choose an external etcd cluster over a stacked topology?

Let's dive in.

Why etcd?

Kubernetes is a distributed system.

It requires a consistent, reliable, and highly available datastore to store cluster state, configuration, and metadata.

A distributed system like Kubernetes must guard against data inconsistencies, split-brain scenarios, and similar failure modes.

etcd is built to provide these guarantees, which makes it an ideal choice for Kubernetes.

etcd Architecture

etcd is a distributed, strongly consistent key-value store that serves as the single source of truth for Kubernetes. It uses the Raft consensus algorithm to maintain consistency across multiple nodes.

The key components are:

Leader-Follower Model: The leader node processes all writes and replicates them to the followers. A write is committed once a majority of nodes have persisted it.

Key-Value Storage: Kubernetes stores its entire state in etcd under path-like keys (for example, keys that begin with /registry). The data store is built on top of bbolt, a fork of BoltDB maintained by the etcd project and known for its high performance and reliability.

Watch Mechanism: The Kubernetes API server watches etcd for changes, so the rest of the cluster can react to updates in near real time.

gRPC API: Provides access to store and retrieve cluster state.
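To make the key-value layout concrete, here is a minimal sketch of how a Kubernetes object maps to an etcd key. It assumes the default /registry prefix and the common resource/namespace/name pattern. The function name registry_key is hypothetical, and the exact layout varies for some resources (for example, some include an API group segment), so treat this as an illustration rather than the API server's actual logic.

```python
from typing import Optional


def registry_key(resource: str, name: str,
                 namespace: Optional[str] = None,
                 prefix: str = "/registry") -> str:
    """Build an etcd-style key for a Kubernetes object (illustrative only).

    Namespaced objects include a namespace segment; cluster-scoped
    objects (such as namespaces themselves) omit it.
    """
    parts = [prefix.rstrip("/"), resource]
    if namespace:
        parts.append(namespace)
    parts.append(name)
    return "/".join(parts)


pod_key = registry_key("pods", "nginx", namespace="default")
ns_key = registry_key("namespaces", "kube-system")
print(pod_key)  # /registry/pods/default/nginx
print(ns_key)   # /registry/namespaces/kube-system
```

On a real cluster, you can list keys under this prefix with etcdctl to see the layout your Kubernetes version actually uses.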

etcd Database

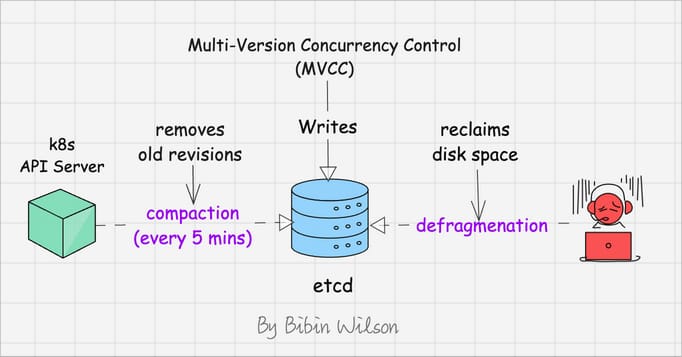

etcd uses Multi-Version Concurrency Control (MVCC).

This means every object update creates a new version instead of modifying the existing one.

This append-only model may cause the database to grow over time because historical versions are retained.

Let's say you have an object that takes up x MB of space. When you update it, MVCC writes a new version instead of overwriting the old one.

The old version stays in the database, so the file keeps growing with every update.

To prevent the database from growing indefinitely, etcd supports compaction. It removes old revisions of data that are no longer needed.

The Kubernetes API server requests compaction every 5 minutes by default (configurable through the kube-apiserver --etcd-compaction-interval flag).

Compaction marks the space used by old revisions as free inside the database file but does not return it to the filesystem. Over time, you end up with many small pockets of unused space (fragmentation). Periodic defragmentation rewrites the file and reclaims that disk space.
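The interplay between MVCC, compaction, and defragmentation can be sketched with a toy in-memory store. This is not how etcd is implemented. The class ToyMVCCStore, its methods, and the free_slots counter are all hypothetical names used only to show the idea: updates append versions, compaction discards superseded ones, and defragmentation hands the freed space back.

```python
class ToyMVCCStore:
    """Toy model of an append-only, multi-version key-value store."""

    def __init__(self):
        self.revision = 0     # global, monotonically increasing revision
        self.history = {}     # key -> list of (revision, value)
        self.free_slots = 0   # space freed by compaction, not yet reclaimed

    def put(self, key, value):
        """Append a new version instead of overwriting the old one."""
        self.revision += 1
        self.history.setdefault(key, []).append((self.revision, value))
        return self.revision

    def get(self, key, revision=None):
        """Return the value as of `revision` (latest if omitted)."""
        target = self.revision if revision is None else revision
        for rev, value in reversed(self.history.get(key, [])):
            if rev <= target:
                return value
        return None

    def compact(self, revision):
        """Drop versions superseded at or before `revision`."""
        for key, versions in list(self.history.items()):
            older = [v for v in versions if v[0] <= revision]
            newer = [v for v in versions if v[0] > revision]
            kept = older[-1:] + newer  # keep the newest of the old ones
            self.free_slots += len(versions) - len(kept)
            self.history[key] = kept

    def defragment(self):
        """Reclaim the space that compaction marked as free."""
        reclaimed, self.free_slots = self.free_slots, 0
        return reclaimed


store = ToyMVCCStore()
key = "/registry/deployments/default/web"
for replicas in (1, 2, 3):
    store.put(key, f"replicas={replicas}")

old_value = store.get(key, revision=1)   # "replicas=1", history is retained
store.compact(revision=3)                # older versions are discarded
versions_left = len(store.history[key])  # 1
reclaimed = store.defragment()           # 2 slots handed back
```

Note that the space is only counted as reclaimed after defragment() runs, which mirrors why compaction alone does not shrink the database file on disk.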

High Availability Architecture

Kubernetes clusters rely on etcd’s high availability (HA) architecture to prevent data loss.

There are two key deployment models for etcd HA.

Stacked etcd: etcd runs on the control plane nodes themselves (simpler, but less isolated).

External etcd: The etcd cluster runs on dedicated nodes, separate from the control plane. This model makes backup and restore easier to manage and offers better resilience and scalability, at the cost of more machines to operate.

Quorum & Fault Tolerance

In etcd, quorum is the minimum number of nodes that must agree on a write. It keeps the cluster consistent and available in the face of node failures.

The quorum is calculated as:

quorum = floor(n / 2) + 1

Where n is the total number of nodes in the cluster.

For instance, to tolerate the failure of one node, a minimum of three etcd nodes is required. To withstand two node failures, you would need at least five nodes, and so on.
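The arithmetic is easy to check in code. The helper names below (quorum, fault_tolerance, min_nodes_for_failures) are hypothetical and only encode the majority rule described here, using integer division for the n / 2 step.

```python
def quorum(n: int) -> int:
    """Smallest majority of an n-node cluster."""
    if n < 1:
        raise ValueError("a cluster needs at least one node")
    return n // 2 + 1


def fault_tolerance(n: int) -> int:
    """Number of nodes that can fail while a majority remains."""
    return n - quorum(n)


def min_nodes_for_failures(failures: int) -> int:
    """Smallest cluster size that survives `failures` node failures."""
    if failures < 0:
        raise ValueError("failures cannot be negative")
    return 2 * failures + 1


for n in range(1, 8):
    print(f"nodes={n} quorum={quorum(n)} tolerates={fault_tolerance(n)}")
```

Running the loop shows why odd cluster sizes are preferred: a 4-node cluster needs a quorum of 3 and still tolerates only 1 failure, the same as a 3-node cluster, so the extra node adds cost without adding fault tolerance.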

The number of nodes in an etcd cluster directly affects its fault tolerance. Here's how it breaks down:

3 nodes: Can tolerate 1 node failure (quorum = 2)

5 nodes: Can tolerate 2 node failures (quorum = 3)

7 nodes: Can tolerate 3 node failures (quorum = 4)

And so on. The general formula for the number of node failures a cluster can tolerate is:

fault tolerance = floor((n - 1) / 2)

Wrapping Up

In distributed systems, concepts like consistency, quorum, and fault tolerance are fundamental.

Even when setting up MongoDB clusters, you need to design them in a way that ensures node failures don’t compromise data integrity.

As DevOps engineers, these are essential concepts to understand. Usually, you get to apply them when working on real-world projects, especially when setting up systems from scratch.

I hope you found this edition useful!

In tomorrow’s edition, I’ll cover an interesting concept (split brain scenario) that is commonly asked in interviews and one you might encounter when designing clustered systems.

Stay tuned! 🚀