✉️ In Today’s Edition

In today’s edition, I will walk you through how production-grade container workflows are designed end-to-end.

Here is what you will learn.

Container Build and Promotion Pipeline (Crash Course)

Docker Build & Promotion Pipeline with GitHub Actions

ArgoCD Architecture Deep Dive

AWS EBS volume clones vs. snapshots

We will also look at:

The rise of Neo Clouds

Spec-driven IaC development

A tool to reduce LLM cost and latency

Real-world engineering lessons from Spotify, Slack, and Netflix on outages, deployments, and reliability

🔥 Can you fix a broken Linux server?

Sad Servers gives you live DevOps scenarios where you debug real problems like high CPU usage, failing services, and networking issues.

No tutorials. Just real troubleshooting.

🧱 Container Build and Promotion Pipeline (Crash Course)

One question I get asked constantly is: how should a container-based workflow be designed end-to-end for production?

If you are looking for a guide to a production-level Docker image build workflow for applications, this one is for you.

In this crash course, you will learn the following.

Deployment environments in real projects

Container registry architecture patterns

Docker image tagging strategy

Git branching strategy for Docker-based CI/CD pipelines

How image promotion works

How the PR-based image build workflow is structured end-to-end

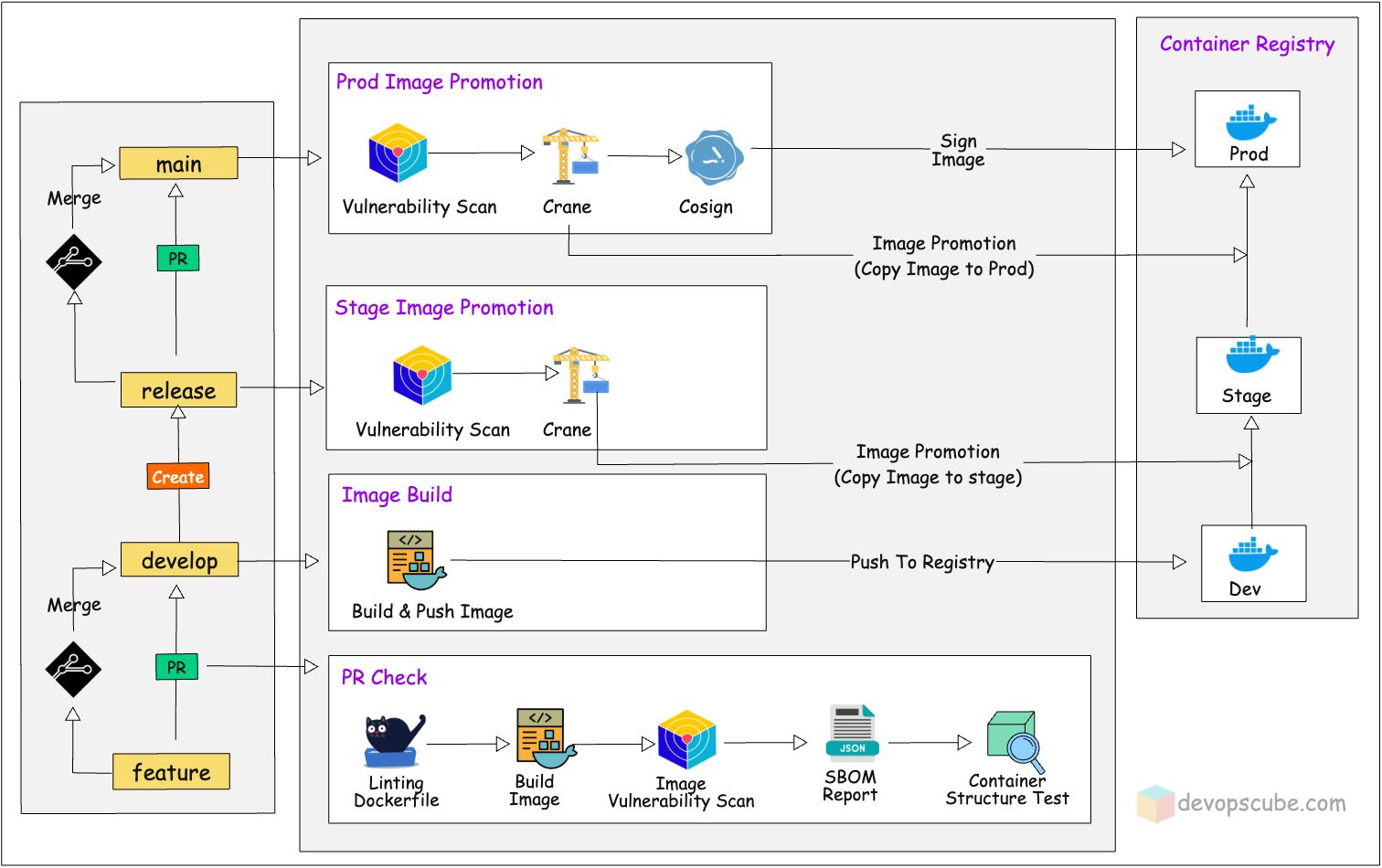

📦 Docker Build & Promotion Pipeline with GitHub Actions

In this hands-on guide, we will walk you through a complete, production-grade Docker image build and promotion pipeline using GitHub Actions, the way it is done in real enterprise environments.

Here is what is covered in this guide.

Automating a Java application build and Dockerization with GitHub Actions

PR base

Promoting images through dev, stage and prod registries

Signing the final container image using Cosign

Build caching, image tagging strategy, registry architecture, and more

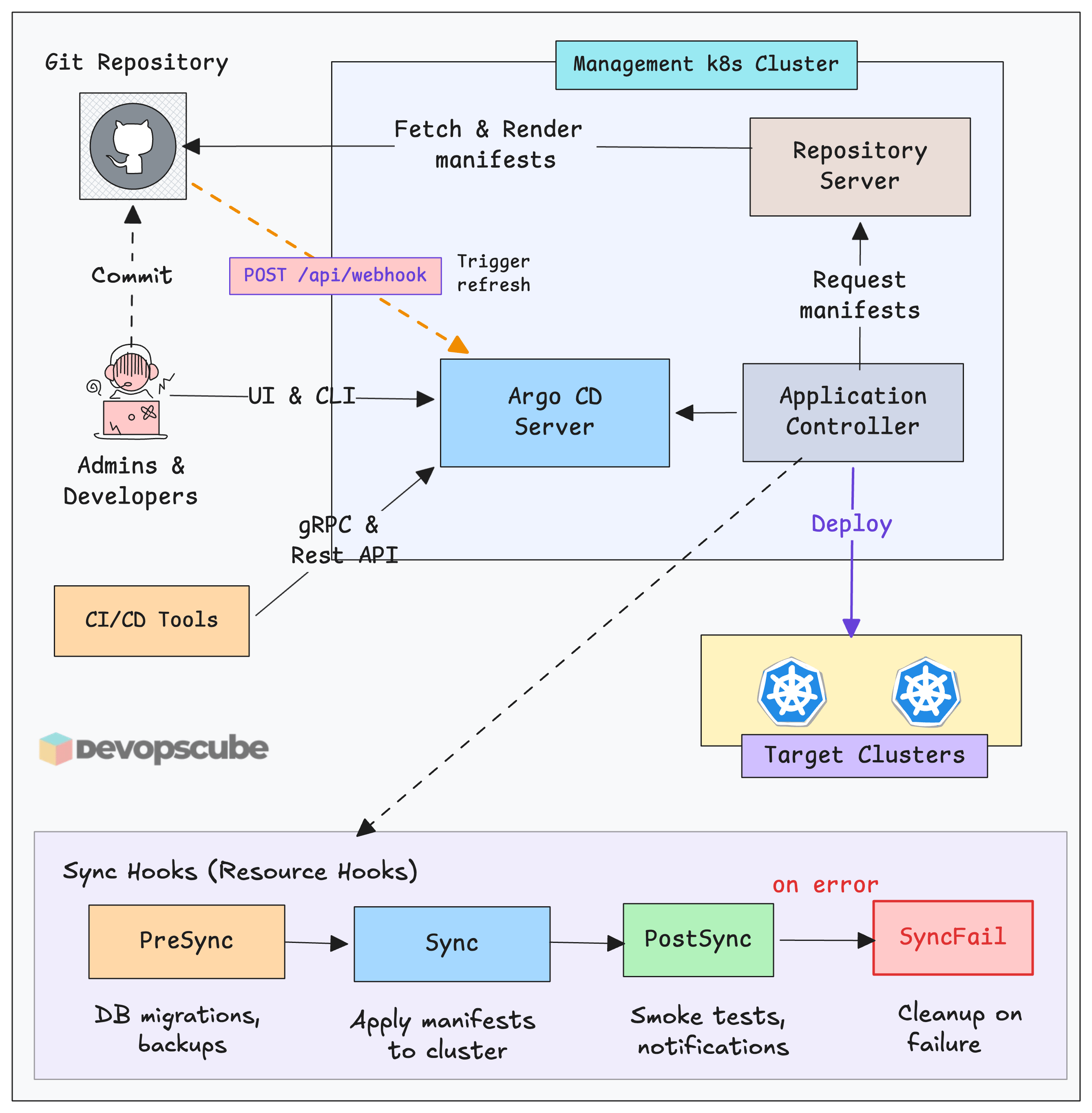

🚀 ArgoCD Detailed Architecture

Argo CD has become a widely used GitOps tool for Kubernetes. But here is the thing. Knowing how to use it and knowing how it works are two very different things.

By the end of this guide, you will have a clear mental model of the following.

Every core component in Argo CD and what it actually does

How components communicate and hand off work to each other

How Argo CD stores data and how to back it up properly

How to run Argo CD in high availability mode

Security features you should know and use

How to monitor Argo CD with Prometheus and Grafana

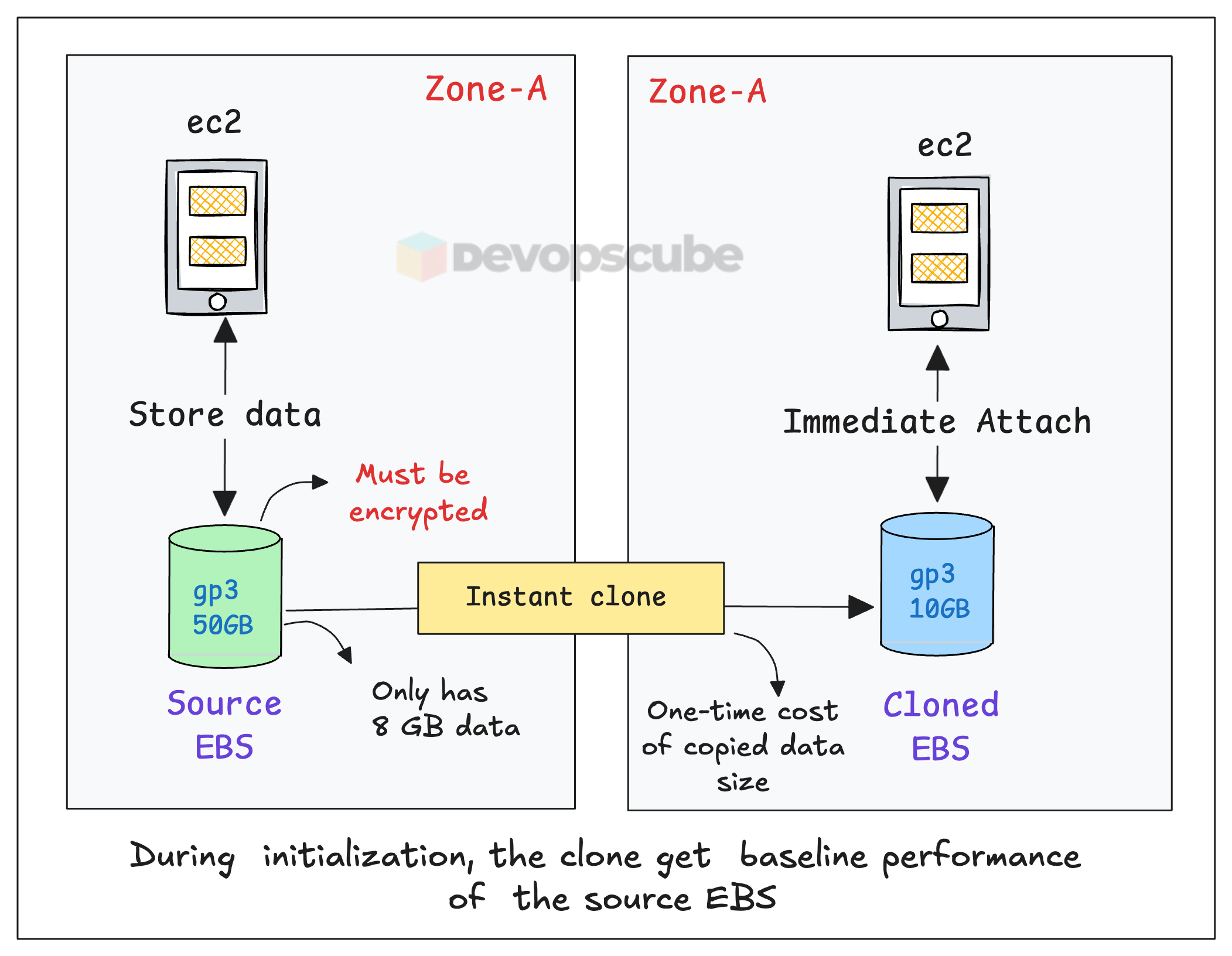

💾 Clone AWS EBS Volumes Instantly

AWS now has an EBS Volume Clone feature that lets you copy an EBS volume instantly with a single API call, and no snapshot is required.

By the end of this guide, you will understand:

What EBS Volume Clones are and how they work

How cloning differs from snapshots (and when to use each)

How to clone an EBS volume using the AWS CLI

Best practices for managing cloned volumes

📦 The Rise of Neo Clouds

A Neo Cloud is a new type of cloud provider built mainly for AI workloads, especially those that need GPUs. You can think of it as GPU-as-a-service.

Unlike traditional cloud providers such as AWS, Azure, and Google Cloud, neoclouds concentrate on providing infrastructure specifically for AI, machine learning, and analytics.

Neoclouds currently account for about 17% of AI infrastructure investment, a figure expected to grow to over 30% in the next ten years.

Here are the main ones you should know:

CoreWeave - One of the biggest GPU clouds (used by OpenAI partners)

Lambda Labs - Very popular for ML engineers

Paperspace (by DigitalOcean) - Developer-friendly GPU cloud

Here is why neoclouds emerged.

The AI boom created massive demand for GPUs. AI models such as LLMs require significant compute power and often run on large GPU clusters. As more companies started building AI products, GPU demand rose rapidly, and supply could not keep up. That gap is what accelerated the growth of neoclouds.

📦 Spec Driven Development (SDD)

SDD is a workflow where you write a detailed specification first before generating any code. Instead of prompting AI randomly and iterating blindly, you clearly define what needs to be built and use that as the single source of truth.

Here is the core idea.

AI is very good at execution, but not at deciding what to build. SDD separates these concerns. Humans define the “what”, and AI handles the “how”.

Here is an example workflow.

Write a spec: Define inputs, outputs, behaviors, and edge cases in a clear format (for example, claude.md)

Validate the spec: Review it with AI to identify gaps before implementation starts

Generate: Use the spec to produce code, tests, and documentation

Verify against spec: Ensure outputs match the specification, not just that the code runs

Update spec first: Any change starts from the spec, not directly in the code

IBM has a project called IaC Spec Kit. It applies SDD to infrastructure such as Terraform and other cloud infrastructure code.
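To make the "verify against spec" step concrete, here is a minimal Python sketch. It is an illustration only, not how IaC Spec Kit or any particular tool works. The `Spec` and `Case` classes, the `resource_name` helper, and the naming rule are all hypothetical, and a real spec would normally live in a document rather than in code.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Case:
    """One behavior or edge case from the spec: given these inputs, expect this output."""
    name: str
    inputs: dict[str, Any]
    expected: Any


@dataclass
class Spec:
    """Hypothetical in-code stand-in for a written spec file."""
    title: str
    cases: list[Case] = field(default_factory=list)


def verify_against_spec(spec: Spec, impl: Callable[..., Any]) -> list[str]:
    """Return the names of the spec cases that the implementation fails."""
    failures = []
    for case in spec.cases:
        try:
            actual = impl(**case.inputs)
        except Exception:
            # A crash on a specified input counts as a failed case.
            failures.append(case.name)
            continue
        if actual != case.expected:
            failures.append(case.name)
    return failures


# Spec for a hypothetical helper that builds an infrastructure resource name.
naming_spec = Spec(
    title="resource_name",
    cases=[
        Case("basic", {"app": "api", "env": "prod"}, "api-prod"),
        Case("uppercase is normalized", {"app": "API", "env": "Prod"}, "api-prod"),
        Case("whitespace is trimmed", {"app": " api ", "env": "dev"}, "api-dev"),
    ],
)


def resource_name(app: str, env: str) -> str:
    """Candidate implementation (for example, AI-generated) that follows the spec."""
    return f"{app.strip().lower()}-{env.strip().lower()}"


def naive_resource_name(app: str, env: str) -> str:
    """Runs without errors, but ignores the edge cases in the spec."""
    return f"{app}-{env}"


print(verify_against_spec(naming_spec, resource_name))
print(verify_against_spec(naming_spec, naive_resource_name))
```

The naive version runs fine and passes the basic case, yet it fails both edge cases. That is the difference between "the code runs" and "the output matches the spec". When the requirement changes, the change goes into `naming_spec` first and the implementation follows.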

🛠️ Reduce LLM Cost and Latency

One of the key issues with LLM applications is the repeated use of large prompts. The same instructions and context are often sent again and again, which increases token usage, cost, and response time.

rkt addresses this by acting as a high-performance CLI proxy between your application and the LLM. It optimizes requests, removes redundant data, and caches responses, reducing token consumption by 60–90%.
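The caching idea can be sketched in a few lines of Python. This is a generic illustration, not the tool's actual implementation: `CachingLLMProxy` and `fake_llm` are hypothetical names, the model call is stubbed so the example runs offline, and collapsing whitespace is only a simple stand-in for removing redundant data.

```python
import hashlib
from typing import Callable, Dict


class CachingLLMProxy:
    """Sits between the application and an LLM call and reuses earlier responses."""

    def __init__(self, call_llm: Callable[[str], str]) -> None:
        self._call_llm = call_llm
        self._cache: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _normalize(prompt: str) -> str:
        # Collapse runs of whitespace so trivially different prompts share a key.
        return " ".join(prompt.split())

    def complete(self, prompt: str) -> str:
        normalized = self._normalize(prompt)
        key = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if key in self._cache:
            # Cache hit: no tokens are sent to the model.
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        response = self._call_llm(normalized)
        self._cache[key] = response
        return response


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call (assumption: a real client would go here)."""
    return f"echo: {prompt}"


proxy = CachingLLMProxy(fake_llm)
first = proxy.complete("Summarize   the deploy log")
second = proxy.complete("Summarize the deploy log")
print(first == second, proxy.hits, proxy.misses)
```

The second request differs only in whitespace, so it is served from the cache and the model is called once. Whitespace normalization is unsafe for prompts where spacing matters, such as code or YAML, so a real proxy would need more careful rules plus cache expiry.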

🧠 From Engineering Blogs (Real Lessons)

Spotify's Global Outage: A must-read for anyone thinking about change blast radius, Kubernetes memory limits, and why "low-risk" config changes still need staged rollouts.

How Slack Cut Deploy-Related Outages by 90%: A practical playbook on building deploy guardrails, safety culture, and cross-team reliability programs at scale.

Slack's Chaos Engineering Playbook: A practical guide to why scheduled chaos beats surprise production failures every time.

Netflix Incident Management: How Netflix reframed incidents from "big scary outages" to "any blip worth learning from" and built tooling so approachable that engineers proactively opened incidents instead of avoiding them.