👋 Hi! I’m Bibin Wilson. In each edition, I share practical tips, guides, and the latest trends in DevOps and MLOps to make your day-to-day DevOps tasks more efficient. If someone forwarded this email to you, you can subscribe here to never miss out!

In today's edition, I will walk you through GPU scheduling in Kubernetes. I will keep it simple with illustrations so you can understand the fundamentals.

Here is what you will learn.

Why GPUs Need Special Scheduling

How Kubernetes learns about GPUs through the Device Plugin

How to make sure GPU pods land on the right nodes

What the NVIDIA GPU Operator does and what you need to know about it

Testing the NVIDIA GPU Operator by deploying an LLM on a GPU

We will also look at:

Migrating 1,000+ EKS Clusters to Karpenter

Seamless EKS Upgrades at HackerRank, and more

🎁 For k8s Certification Aspirants

If you are preparing for Kubernetes certifications, this is a good chance to save money. Use code DCUBE30 at kube.promo/devops to get a flat 30% discount on individual certifications.

For 50% bundle discounts, extra coupons, and other offers, check this GitHub repository for the full list.

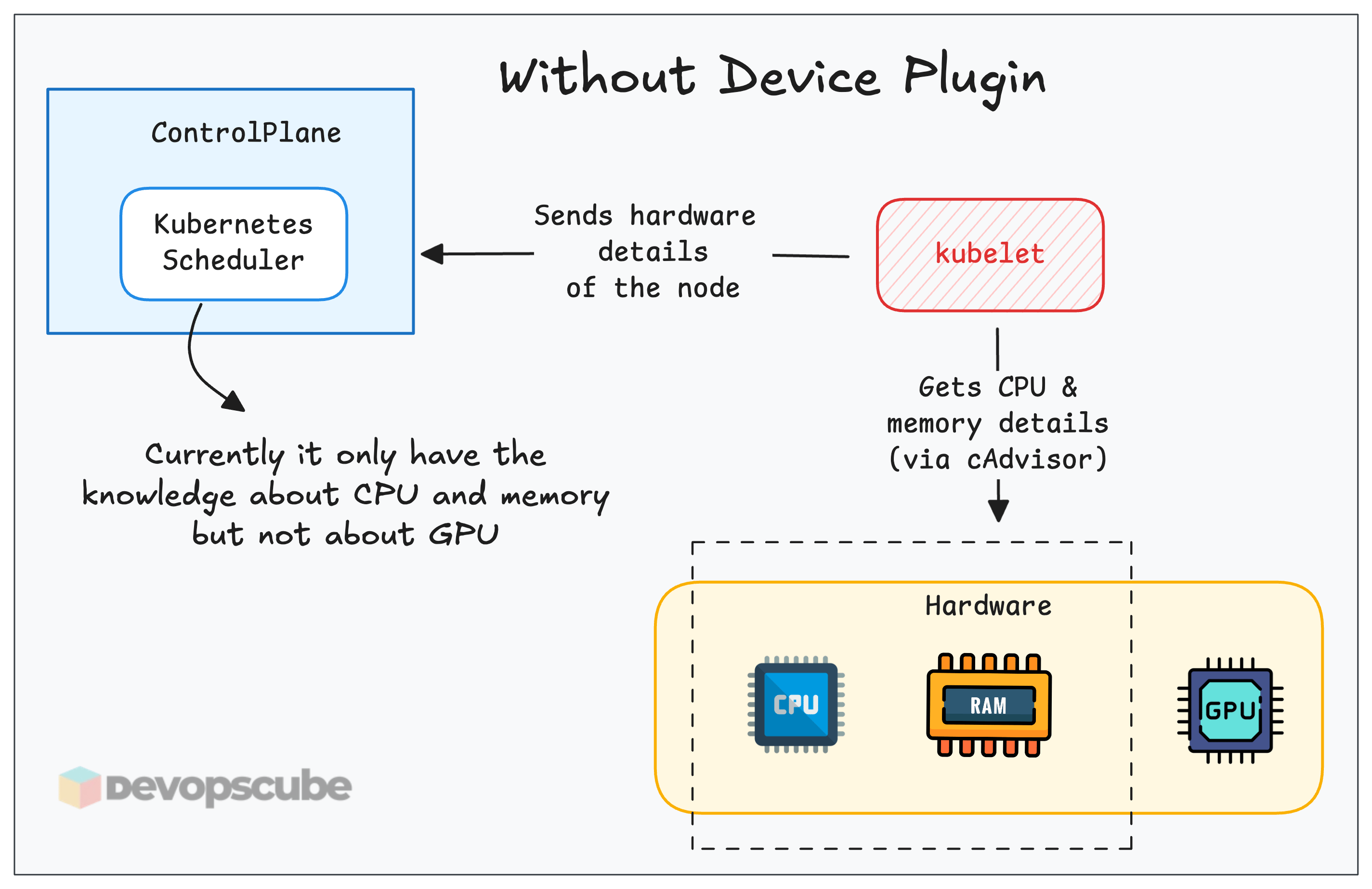

Kubernetes was built for CPUs and memory. The kubelet discovers these resources natively (through its embedded cAdvisor) and reports them for every node, so the scheduler understands them out of the box.

But GPUs are different. They are specialized hardware made by vendors like NVIDIA and AMD. Kubernetes has no built-in knowledge of what a GPU is, how much memory it has, or how fast it can compute.

So, even if you deploy an application (e.g., an LLM) that requires a GPU on a Kubernetes cluster, the scheduler has no way to find a node with a GPU and assign it to your pod.

This is where the Device Plugin framework comes in.

Device Plugins

By default, Kubernetes has no idea what a GPU is. It only understands resources like CPU and memory. To make Kubernetes aware of GPUs, you need the Device Plugin framework.

It is basically a set of APIs that allows third-party hardware vendors like NVIDIA and AMD to create plugins that advertise specialized hardware (like GPUs or other accelerators) to the kubelet, and through it to the Kubernetes scheduler.

The following diagram illustrates what happens when you install a Device Plugin on a GPU Node.

Here is how it works.

Device plugins run on specific GPU nodes as DaemonSets. They register with the kubelet and communicate via gRPC.

They report the node's GPU hardware (NVIDIA, AMD, and so on) to the kubelet as a named resource such as nvidia.com/gpu.

The kubelet shares this information with the API server, so the scheduler knows which nodes have GPUs.
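To make the result of these steps concrete, here is a rough sketch of what the relevant part of a GPU node object can look like once the device plugin has registered. The numbers are made up for illustration; only the nvidia.com/gpu resource name comes from this guide.

```yaml
# Excerpt of a Node object, as printed by `kubectl get node <node-name> -o yaml`.
# All quantities below are illustrative, not taken from a real cluster.
status:
  capacity:
    cpu: "8"
    memory: 32Gi
    nvidia.com/gpu: "1"      # extended resource advertised by the device plugin
  allocatable:
    cpu: "8"
    memory: 31Gi
    nvidia.com/gpu: "1"      # what the scheduler can actually hand out to pods
```

If nvidia.com/gpu is missing from this section, the scheduler treats the node as having no GPUs, even when the hardware is physically present.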

Scheduling Pods With GPU

Once the device plugin is set up, you can request a GPU in your Pod spec, like this:

    resources:
      limits:
        nvidia.com/gpu: 1

Once you deploy the pod spec, the scheduler sees your GPU request and finds a node with available NVIDIA GPUs. The pod gets scheduled to that node.

Once scheduled, the kubelet invokes the device plugin's Allocate() method to reserve a specific GPU. The plugin then provides the necessary details like the GPU device ID. Using this information, the kubelet launches your container with the appropriate GPU configurations.
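For context, here is a minimal sketch of a complete Pod manifest built around that GPU limit. The pod name is hypothetical and the image tag is an assumption; swap in any CUDA-enabled image you already use.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: gpu-smoke-test                 # hypothetical name
spec:
  restartPolicy: Never                 # run once and exit
  containers:
    - name: cuda
      # Assumed image tag; any image that ships nvidia-smi works here.
      image: nvidia/cuda:12.4.1-base-ubuntu22.04
      command: ["nvidia-smi"]          # prints the GPU the container was given
      resources:
        limits:
          nvidia.com/gpu: 1            # whole GPUs only; no fractional values
```

GPUs are requested under limits. If you leave out requests, Kubernetes uses the limit as the request, so the two always match.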

The following image illustrates the detailed flow of an NVIDIA device plugin.

Keeping GPU Nodes Clean

Here is a mistake that happens more often than you think.

In mixed clusters (GPU + non-GPU nodes), you must prevent non-GPU workloads from consuming GPU node resources.

Otherwise, a random deployment can land on a GPU node simply because the scheduler sees free CPU and memory there, leaving too little room for the workloads that actually need the GPU.

To stop this from happening, use taints and tolerations alongside node affinity or a nodeSelector.

Here is how to use taints, tolerations, and node affinity.

Taint the GPU node with something like gpu=true:NoSchedule, so any pod without a matching toleration gets blocked.

Then add a toleration to your GPU pods to tell Kubernetes the pod is allowed to run on the tainted GPU nodes.

Also, add a node affinity or a nodeSelector so the GPU workload lands on the exact nodes with labels like node-type=gpu rather than just letting it schedule anywhere.

Note: Using both taints and node affinity together is a best practice in production. One blocks unwanted pods, the other helps schedule the pods on the right GPU nodes.
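Put together, the taint, toleration, and nodeSelector could look roughly like the sketch below. It reuses the gpu=true:NoSchedule taint and the node-type=gpu label from above; the node name, pod name, and image are hypothetical placeholders.

```yaml
# Relevant part of the GPU node object. In practice the taint and label
# are usually applied with kubectl or by your node group configuration.
apiVersion: v1
kind: Node
metadata:
  name: gpu-node-1                     # hypothetical node name
  labels:
    node-type: gpu
spec:
  taints:
    - key: gpu
      value: "true"
      effect: NoSchedule               # blocks pods without a matching toleration
---
apiVersion: v1
kind: Pod
metadata:
  name: llm-inference                  # hypothetical pod name
spec:
  tolerations:
    - key: gpu
      operator: Equal
      value: "true"
      effect: NoSchedule               # allows this pod onto the tainted node
  nodeSelector:
    node-type: gpu                     # forces the pod onto GPU-labelled nodes
  containers:
    - name: app
      image: registry.example.com/llm-server:latest   # placeholder image
      resources:
        limits:
          nvidia.com/gpu: 1
```

The toleration alone only permits scheduling on the GPU node; it is the nodeSelector that actually steers the pod there.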

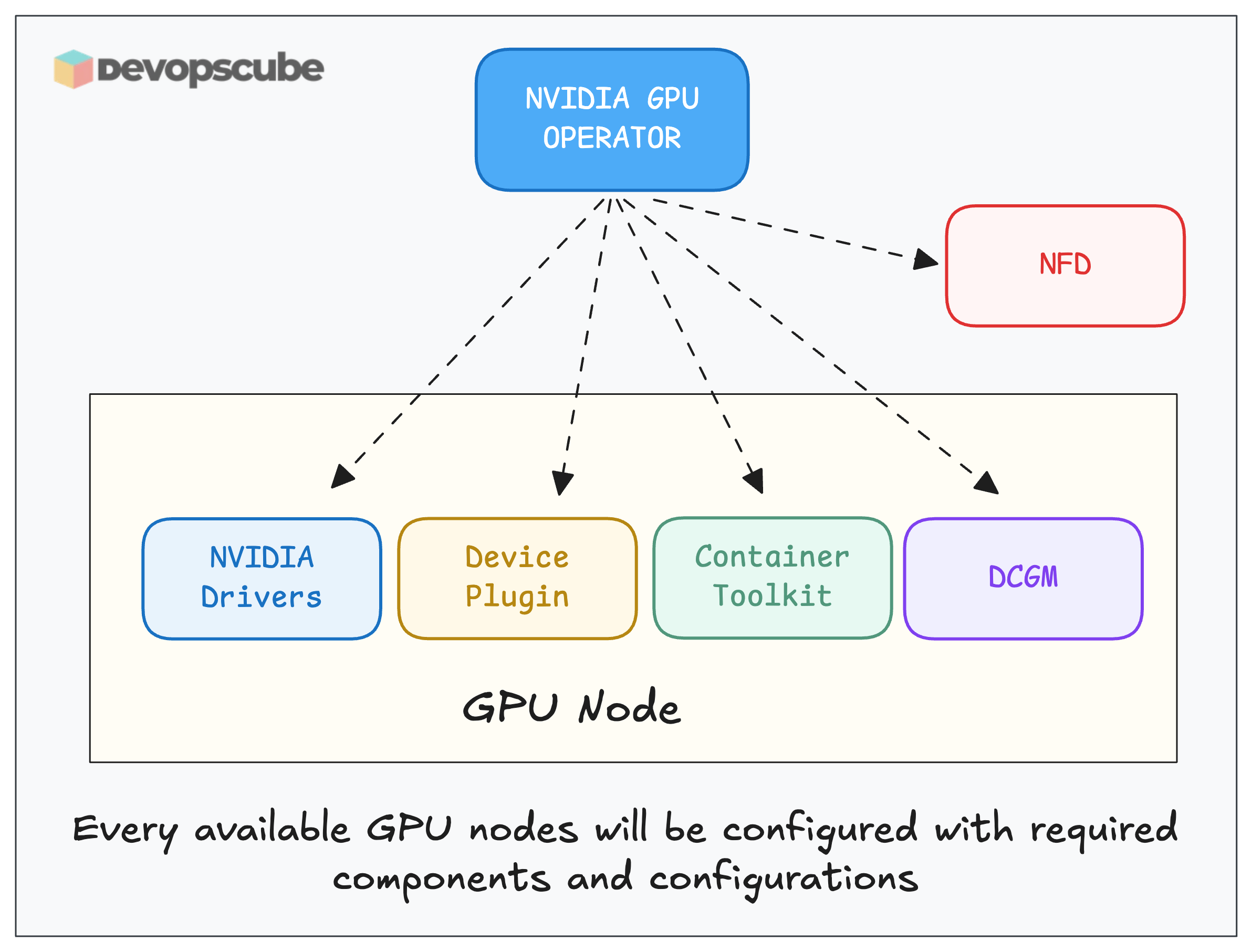

The GPU Operator

Setting up GPU support manually means installing drivers, the device plugin, a container runtime hook, metrics exporters, and doing it all over again each time you add a new node.

It gets complicated when more GPU nodes are added.

The GPU Operator is a Kubernetes operator that handles the full GPU software stack for you. One Helm install, and it takes care of everything, including new nodes that join the cluster later.

Important Note: There is no standard operator for GPUs. You have to choose the operator based on the hardware you are using. For example, if the GPU you are using is from NVIDIA, you have to install the NVIDIA GPU Operator (the one we are using in this guide).

The following image shows the services deployed by the NVIDIA GPU operator on every GPU node.

Here is what each component does.

NVIDIA Drivers: Installs and manages the NVIDIA kernel drivers on the host node.

Device Plugin: The Kubernetes device plugin that registers GPU resources (nvidia.com/gpu) with the kubelet.

Container Toolkit: Configures the container runtime (containerd/CRI-O) to give containers access to GPU devices.

DCGM (Data Center GPU Manager): Exposes deep GPU metrics like utilization, memory, temperature, ECC errors, etc. It is typically scraped by Prometheus via the DCGM Exporter for observability.

NFD (Node Feature Discovery): Detects GPU hardware presence and labels the nodes. The GPU Operator uses these labels to know which nodes have GPUs. (Refer to the NFD newsletter edition to learn more.)

Note: GPU nodes in managed services like EKS, DigitalOcean Kubernetes, or AKS typically come with pre-built GPU drivers.
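The components above map to toggles in the operator's Helm values. The following is only a sketch: the key names are written as I recall them from the NVIDIA GPU Operator chart, so treat them as assumptions and check them against the values.yaml of the chart version you install.

```yaml
# values.yaml (sketch) passed to the GPU Operator chart with `helm install -f values.yaml`.
# Key names are assumptions; verify them for your chart version.
driver:
  enabled: false      # skip driver installation when the node image already ships drivers
toolkit:
  enabled: true       # NVIDIA Container Toolkit
devicePlugin:
  enabled: true       # registers nvidia.com/gpu with the kubelet
dcgmExporter:
  enabled: true       # GPU metrics for Prometheus
nfd:
  enabled: true       # Node Feature Discovery labels
```

Disabling the driver component is the usual adjustment on managed node images that already include drivers, so the operator does not try to install a second copy.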

Hands-On GPU Node Setup & Testing

If you have access to GPUs locally or on a cloud platform, you can deploy a Kubernetes cluster with GPU nodes and test it by running an ML workload that requires a GPU.

Important Note: Even if you do not have access to a GPU, you can still go through the document to understand how it works in practice.

We have published a blog post that shows how to get started with GPUs on Kubernetes.

It covers:

Setting up the NVIDIA GPU Operator on a Kubernetes cluster

Verifying that Kubernetes detects the GPUs

Deploying a real GPU-based workload to validate the full stack

What's Next?

Getting GPUs working is step one.

A big challenge in production is GPU waste. For example, an inference job may only need 2–4 GB of GPU memory, but Kubernetes allocates the entire GPU by default.

To solve this, there are different strategies for sharing GPUs in Kubernetes so they are used efficiently, for example GPU time-slicing and Multi-Instance GPU (MIG).

Also, if GPU utilization is consistently below 50% on an allocated GPU, you are leaving money on the table. That is where sharing strategies help you.
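As a small preview, time-slicing with the NVIDIA device plugin is driven by a config like the sketch below. The ConfigMap name, namespace, and the data key are assumptions for illustration, and the operator still has to be pointed at this ConfigMap before it takes effect.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: time-slicing-config            # hypothetical name
  namespace: gpu-operator              # assumed operator namespace
data:
  any: |-                              # arbitrary key naming this config
    version: v1
    sharing:
      timeSlicing:
        resources:
          - name: nvidia.com/gpu
            replicas: 4                # each physical GPU is advertised as 4 schedulable GPUs
```

With this in place, four pods that each request nvidia.com/gpu: 1 can share one physical GPU. Time-slicing gives no memory isolation between them, which is one reason MIG exists as a separate option.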

I will cover those strategies in the upcoming editions.

Note: Dynamic Resource Allocation (DRA), which reached beta in Kubernetes 1.32, is the next evolution in hardware resource management.

It provides fine-grained, flexible resource allocation that device plugins cannot offer.

However, device plugins are still widely used in production today, and both models currently coexist.

🧱 Tool Spotlight: llm-checker

Not every LLM will run well on every machine. The performance depends on your GPU, available memory, and the backend being used (Apple Metal, NVIDIA CUDA, AMD ROCm, etc.).

This is where llm-checker helps. It is a simple CLI tool that scans your system hardware and tells you which models from the Ollama library your machine can realistically run.

So instead of downloading large models blindly and hoping they work, you can quickly check which ones are actually compatible with your setup.

📦 From Engineering Blogs (Real Lessons)

Learn from what real engineering teams shipped, broke, and fixed so you don't have to learn everything the hard way.

Migrating 1,000+ EKS Clusters from Cluster Autoscaler to Karpenter: It covers how Salesforce built custom tooling to handle 1,180 node pools, cut operational overhead by 80%, and reduced scaling latency from minutes to seconds without disrupting production workloads.

Seamless EKS Upgrades at HackerRank: How HackerRank manages EKS upgrades across 14 clusters without downtime, including a custom Bash automation that cut upgrade time from 4 weeks to 2, and how they communicate changes across engineering teams before every production window.

Cloudflare's November 2025 Outage Postmortem: A database permissions change doubled the size of a Bot Management config file, which cascaded into a global 2-hour outage affecting X, ChatGPT, and Shopify. A must-read for anyone thinking about config deployment safety and blast radius at scale.

Fail Small: Cloudflare's Resilience Plan: After two major outages in three weeks, Cloudflare declared a company-wide Code Orange. Their plan to prevent global config changes from taking down the network is a practical lesson in progressive deployments and health-mediated rollouts.

🧰 Remote Job Opportunities

DigiBoxx Technologies - SRE-2 (DevOps Engineer) (5-9 Yrs)

Smart Working - DevOps Engineer (5 Yrs)

Cision - Senior DevOps Engineer/SRE (5+ Yrs)

Eltropy - DevOps Engineer (4+ Yrs)

Hireologist - Senior DevOps Engineer (AWS) (6+ Yrs)

Maitsys - DevOps Engineer (6+ Yrs)

Portainer.io - Senior DevOps Engineer (Kubernetes)(6+ Yrs)

Harris - Sr. Site Reliability Engineer (5+ Yrs)

Delphic Global - SRE(DevOps/Cloud Engineer) (5+ Yrs)

Salt - DevOps Engineer (4-6+ Yrs)