✉️ In Today’s MLOps Edition

Here is what we will cover today.

Airflow and DAGs explained for DevOps engineers

How Airflow, DVC, EKS, and S3 work together

Inside a real DVC pipeline DAG

Deploying the pipeline on Airflow (EKS)

Verifying data versioning

By the end, you will see how real teams automate dataset versioning.

📦 MLOps Code Repository

All hands-on code for the entire MLOps series is being pushed to the DevOps to MLOps GitHub repository. We will refer to the code in this repository in every edition.

Fork the repository to follow along. As new updates are added, regularly pull changes from the upstream repo to keep your fork in sync. Check out this guide to learn how to keep your fork updated.

In the last edition, we manually versioned data using dvc add and dvc push to understand how DVC works.

In real setups, ETL pipelines run on a schedule, and data versioning happens automatically with no manual steps. In this edition, we integrate DVC into an Airflow DAG on Kubernetes, just like in a real project setup.

Note: This is a hands-on deep dive. Read it online for a better experience.

Let's get started.

What is Apache Airflow?

Apache Airflow is an open source application that helps build and manage complex workflows and data pipelines, such as the pipeline we discussed in the dataset edition.

Also, Apache Airflow 3 is a total redesign that expands its capabilities to support complex AI, ML, and near real-time data workloads.

Key Insight: 80,000 organizations use Airflow, with over 30% of users running MLOps workloads and 10% using it for GenAI workflows

As DevOps engineers, understanding Airflow's infrastructure foundations helps us design and operate data and ML pipelines in real projects. So we will cover just enough of the core basics to get you started.

Quick Airflow Primer

As you know, our data pipeline is a series of steps that collect data from different sources, process it, and then store it in a storage system. These steps include collecting, cleaning, transforming, filtering, and storing data. We call this the data pipeline (ETL).

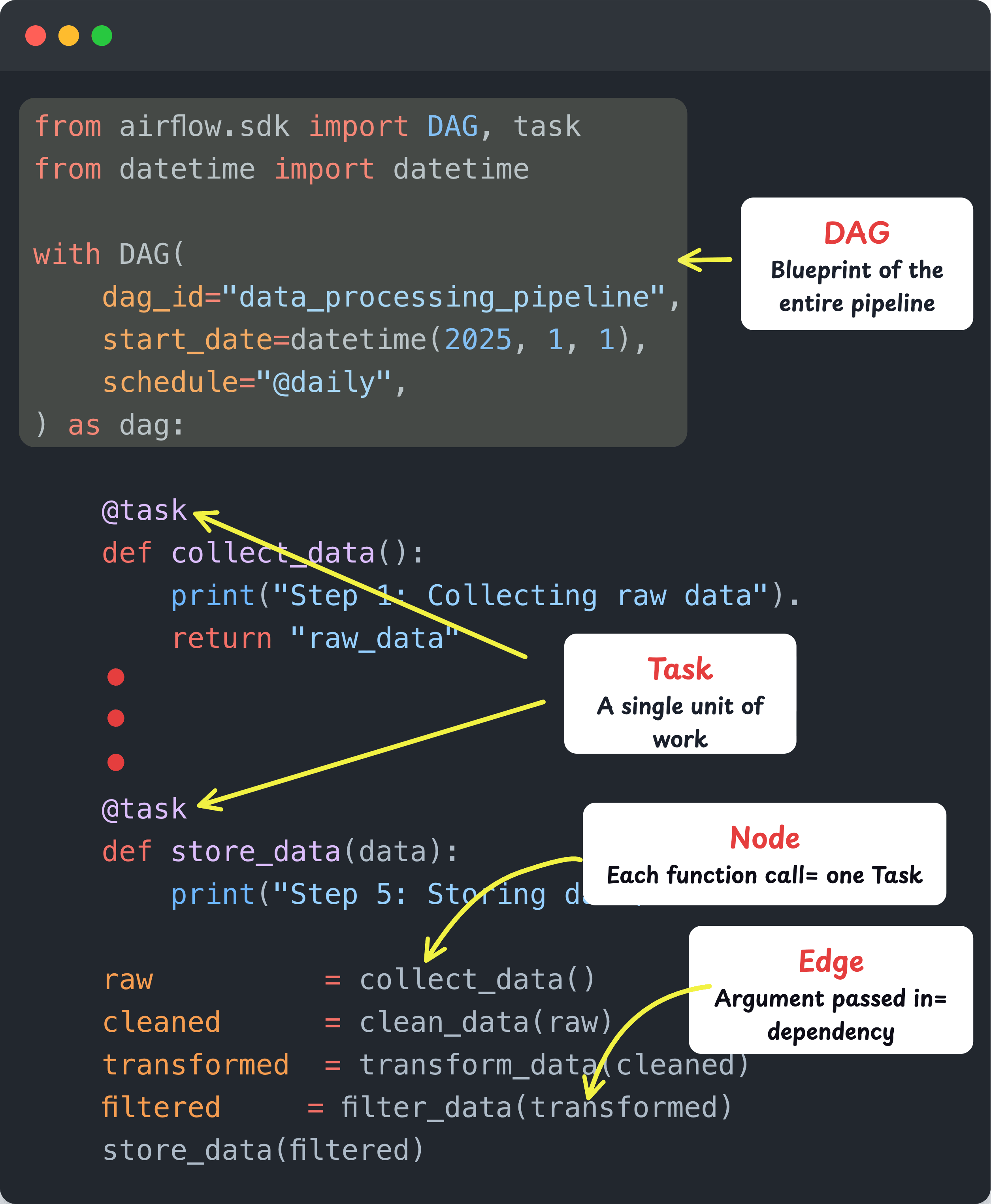

In the data pipeline, each individual step (collecting, cleaning, etc.) is called a Task. A Task is simply a single unit of work that Airflow executes.

Now, these tasks don't run independently. They need to run in a specific order. Why? Well, the logic is simple. You can't clean data before collecting it, and you can't store data before transforming it.

This is where a DAG (Directed Acyclic Graph) comes in. It is a foundational concept in Data engineering and AI/ML workflows. It is used to define how tasks depend on each other and in what order they should run.

Directed Acyclic Graph (DAG)

A DAG is basically a definition of your entire pipeline written in Python. It describes all the tasks and the order in which they should run. Think of it as the blueprint of your data pipeline. Here is what each word in DAG means.

Directed: Tasks flow in one direction (collect, clean, transform and then store)

Acyclic: No cycles exist. Once a task is completed, the workflow does not go back to a previous step.

Graph: The pipeline is represented as a graph. Each task is a node and each dependency is an edge. This defines how tasks are connected and which task runs after which.

So in simple terms, a DAG is your pipeline, a Task is each step inside it.
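To make the ordering idea concrete, here is a minimal sketch using Python's standard-library graphlib module (Python 3.9+), not Airflow itself. The task names mirror the four steps above. The dependencies dictionary and the run_order helper are illustrative names and not part of any Airflow API. In a real Airflow DAG file, the same dependencies are typically declared with the >> operator between tasks.

```python
from graphlib import CycleError, TopologicalSorter

# Each key is a task; the set lists the tasks it depends on (its upstream tasks).
dependencies = {
    "collect": set(),
    "clean": {"collect"},
    "transform": {"clean"},
    "store": {"transform"},
}


def run_order(deps):
    """Return one valid execution order; raises CycleError if the graph has a cycle."""
    return list(TopologicalSorter(deps).static_order())


order = run_order(dependencies)
print(order)

# The "acyclic" rule: a graph that loops back on itself has no valid order.
try:
    run_order({"collect": {"store"}, "store": {"collect"}})
except CycleError:
    print("cycle detected, no valid run order")
```

Because this pipeline is a straight chain, there is exactly one valid order. With branching pipelines, several orders can be valid, and the scheduler is free to run independent tasks in parallel.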

If you have worked with CI/CD pipelines, this is exactly the same concept. One failure stops everything downstream. Airflow applies that exact model to data pipelines.
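The "one failure stops everything downstream" behaviour can be sketched with a small graph walk. This is an illustration in plain Python, assuming a hypothetical downstream mapping and helper name. In Airflow, with the default trigger rule, tasks blocked this way end up in the upstream_failed state instead of running.

```python
from collections import deque

# Downstream edges: task -> tasks that run after it.
downstream = {
    "collect": ["clean"],
    "clean": ["transform"],
    "transform": ["store"],
    "store": [],
}


def blocked_by_failure(edges, failed_task):
    """Return every task downstream of failed_task, in breadth-first order."""
    blocked = []
    seen = set()
    queue = deque(edges.get(failed_task, []))
    while queue:
        task = queue.popleft()
        if task in seen:
            continue
        seen.add(task)
        blocked.append(task)
        queue.extend(edges.get(task, []))
    return blocked


# If "clean" fails, nothing after it should run.
print(blocked_by_failure(downstream, "clean"))
```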

The following image illustrates how a sample DAG is structured in code. It shows the DAG definition, Tasks, Nodes, and Edges. This is the actual code you write in Airflow to build any pipeline.

📌 DevOps Insight

Terraform already uses this concept. It internally builds a resource dependency graph (also a DAG) before running terraform apply. That is how it knows to create the VPC before the subnet.

Airflow Executors

So where does this Python code (DAG) actually run?

That is what the Airflow Executor decides. As the name suggests, the Executor determines how and where your DAG gets executed.

Just like Jenkins or GitHub Actions use agents/runners to execute CI/CD pipelines, Airflow uses Executors. There are different types of Executors, and you choose one based on where you are running Airflow and how you want to execute your DAGs.

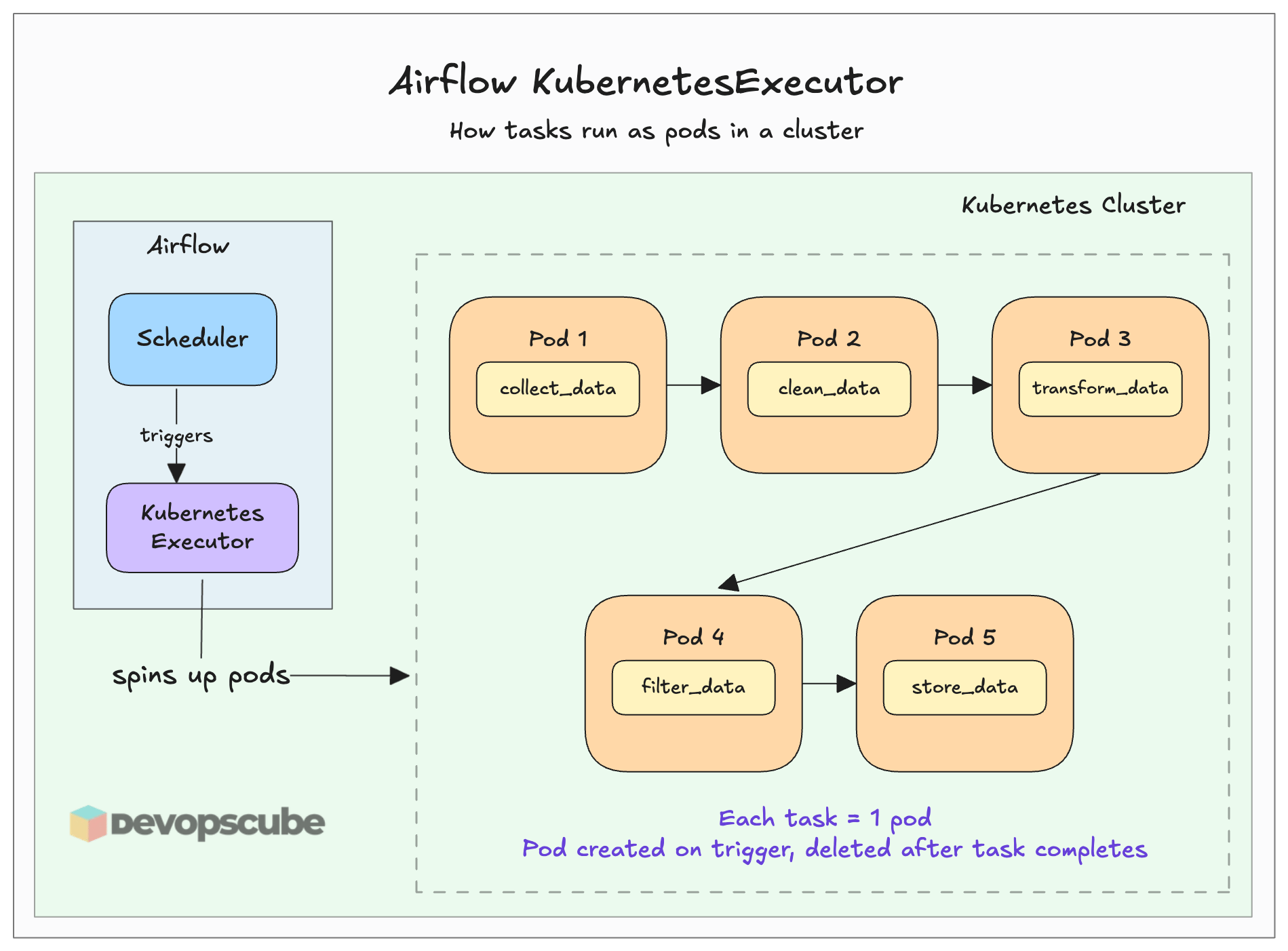

In our case, we will use the KubernetesExecutor. This executor spins up a pod in the Kubernetes cluster for every task in the DAG when you trigger the pipeline. It is similar to how you select an agent or runner to execute your CI/CD job in Jenkins or GitHub Actions.

Think of it as the dedicated environment where your DAG tasks actually run. The following image illustrates it better.

How Airflow, DVC, and S3 Work Together

Now that you have some basics, let’s look at the Airflow architecture and understand how it fits into the workflow from the EKS and S3 perspective.

Here is how it works.

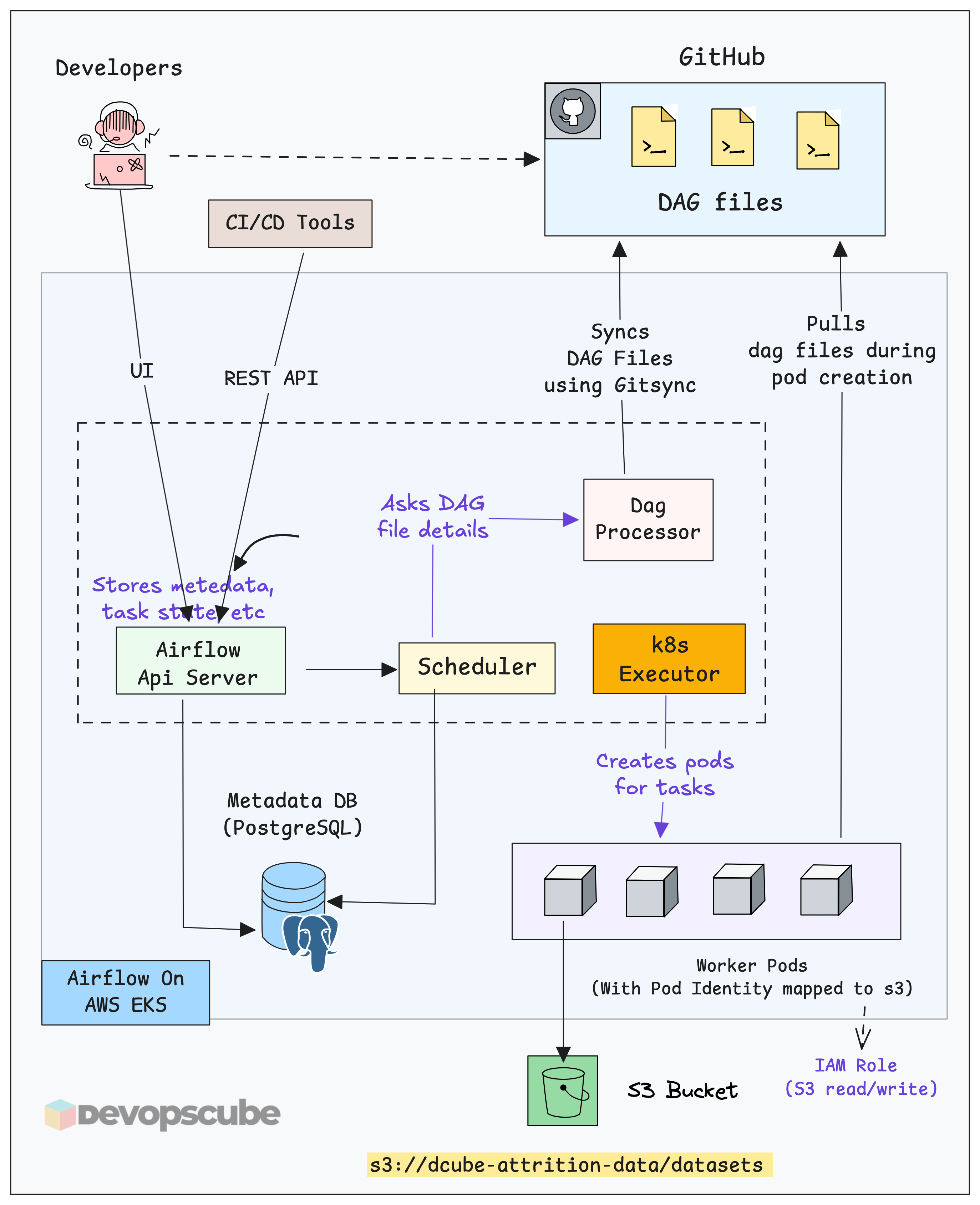

Developers push DAG files to GitHub through CI/CD. The entire pipeline code (including DVC tasks) lives in Git.

GitSync keeps Airflow in sync with GitHub. The DAG Processor runs a GitSync sidecar that continuously watches the repo. When a new DAG is pushed, Airflow picks it up automatically.

The Scheduler reads the DAG and decides what tasks should run and when.

The Kubernetes Executor creates a separate pod for each task. These are short-lived pods. Once the task finishes, its pod is deleted.

Worker pods execute the data-related tasks defined in the DAG.

Each worker pod uses Pod Identity mapped to an IAM Role. This allows secure access to S3 without storing any credentials.

After the final dataset is created, worker pods push the data to S3 using DVC. For example, datasets are stored in s3://dcube-attrition-data/datasets/. DVC records the new version in the .dvc pointer file. This pointer file is then committed and pushed back to GitHub.

Airflow Worker Pod DVC Configuration

On your local machine, DVC just works. You have Git installed, AWS credentials in ~/.aws, and DVC available in your virtual environment.

However, the Airflow worker pod on Kubernetes does not have any of these by default. So, we need to create a custom Docker image with the required tools. This is similar to how you configure a CI/CD runner for specific tasks.

The custom image should include:

DVC installed and baked into the Airflow Docker image, along with your pipeline dependencies.

Git installed and configured to run git add, git commit, and git push from inside the DAG.

You can find the custom Dockerfile in the MLOps repository.

platform-tools/airflow/Dockerfile

I have already pushed the image to Docker Hub and used it in the DAG. For testing, you can use this image directly. You don’t need to rebuild it.

The DVC Pipeline DAG (What It Does)

You can find the DAG we are going to use in the repo at the following path.

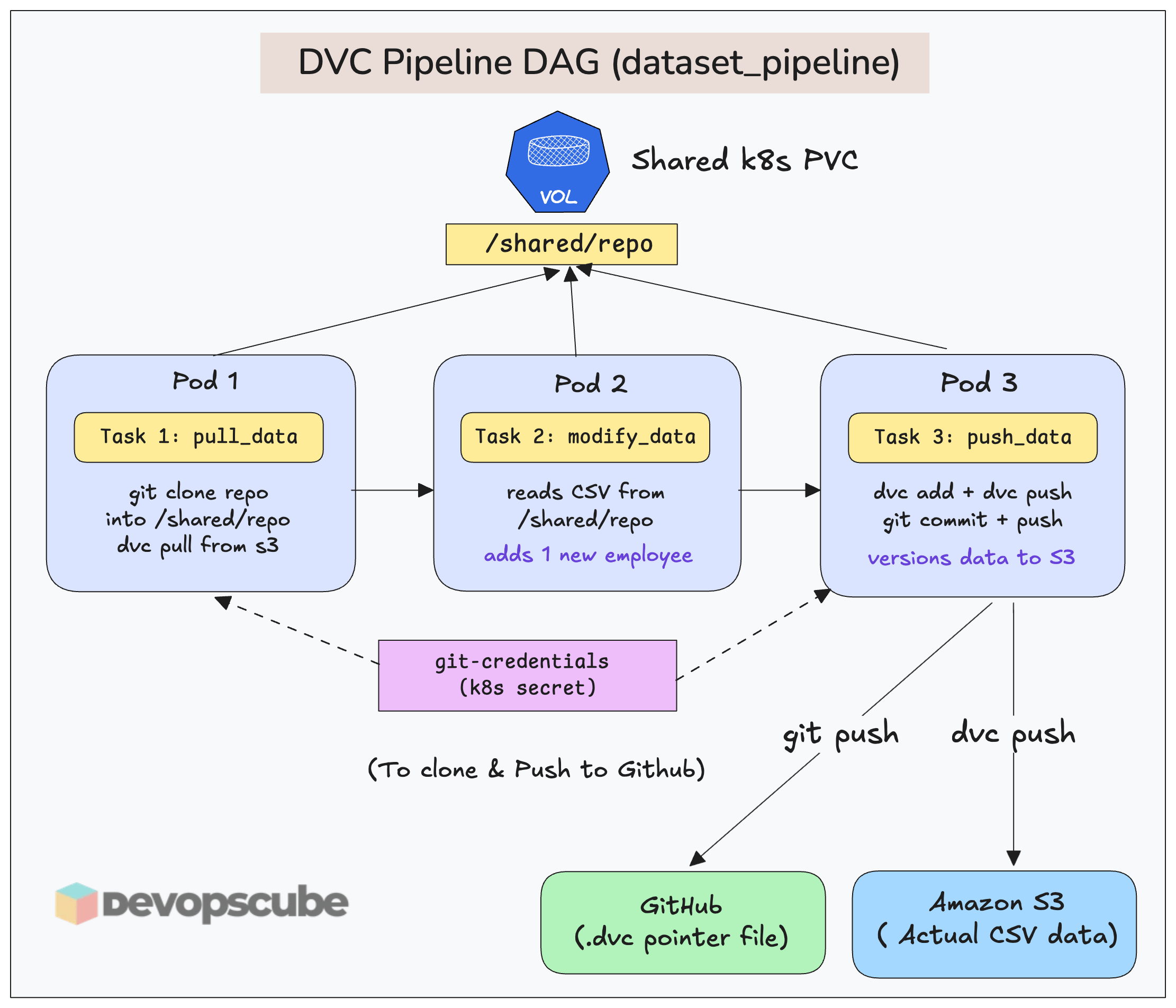

platform-tools/airflow/dags/dvc-pipeline.py

The following image illustrates the DAG workflow.

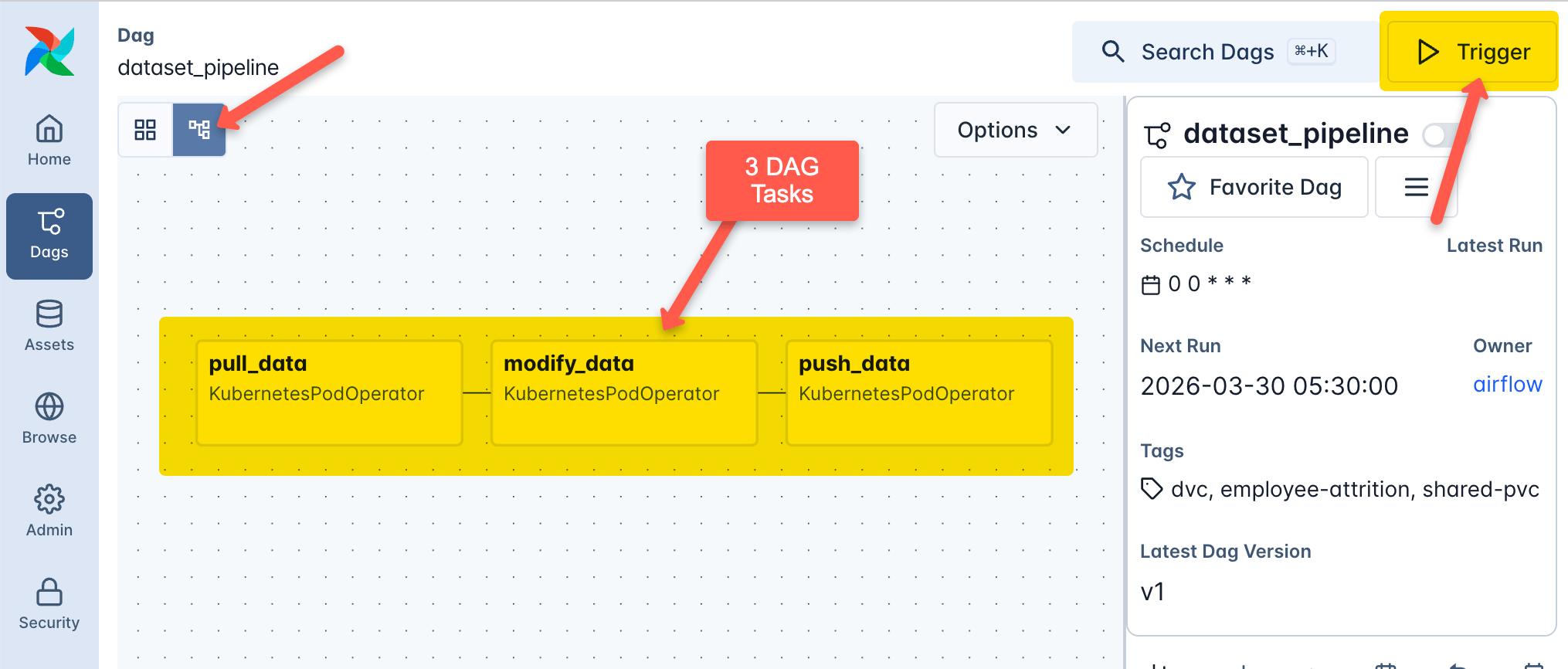

Here is what this DAG does.

It has three tasks, each running in its own pod using KubernetesPodOperator with the custom airflow-dvc-worker:v2.0.0 image (prebaked with DVC, Git, and dependencies). All pods share the same PVC (airflow-shared-pvc) mounted at /shared, so data flows between tasks.

Task 1 (pull_data) clones the GitHub repo into /shared/repo using credentials from the git-credentials Kubernetes secret. It then runs dvc pull to fetch the current version of employee_attrition.csv from S3 into the workspace.

Task 2 (modify_data) reads the CSV from /shared/repo, appends one new randomly generated employee record, and writes the updated file back to the same path on the shared PVC.

Task 3 (push_data) takes the updated CSV, runs dvc add to track it, and dvc push to upload it to S3. It then stages the changes, commits the .dvc file, and pushes the commit to GitHub.
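What Task 2 does can be sketched roughly as follows. This is not the code from dvc-pipeline.py. The column names, the random value range, and the append_random_record helper are assumptions made for illustration, and the demo runs against a temporary file instead of the shared PVC.

```python
import csv
import random
import tempfile
from pathlib import Path


def append_random_record(csv_path, rng=random):
    """Append one randomly generated row that matches the file's existing header."""
    with open(csv_path, newline="") as f:
        header = next(csv.reader(f))
    # Hypothetical value generator: every column gets a random integer.
    new_row = {column: rng.randint(1, 100) for column in header}
    # Assumes the file already ends with a newline, as CSV writers normally leave it.
    with open(csv_path, "a", newline="") as f:
        csv.DictWriter(f, fieldnames=header).writerow(new_row)
    return new_row


# Demo against a throwaway file standing in for the dataset on the shared volume.
demo = Path(tempfile.mkdtemp()) / "employee_attrition.csv"
demo.write_text("Age,MonthlyIncome,YearsAtCompany\n41,5993,6\n")
append_random_record(demo)
row_count = len(demo.read_text().splitlines()) - 1  # exclude the header line
print(row_count)
```

Because the file content changes, its hash changes too, which is what gives the next task a new dataset version to track.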

📌 Key Insight

The purpose of this DAG is to show how a DAG works and how Airflow tasks use DVC for data versioning.

In real projects, instead of pulling data using DVC in Task 1, you will have a series of tasks that collect data and run ETL jobs (as we learned in edition 1). Only the final DVC step remains the same as what we are doing here.
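Since that final DVC step is the reusable part, here is a hedged sketch of the command sequence it boils down to. The dvc_push_commands and run_all helpers, the commit message, and the branch default are assumptions for illustration. The actual task in the repository may differ. The sketch only builds and prints the commands, so it is safe to run anywhere.

```python
import subprocess


def dvc_push_commands(dataset_path, branch="main", message="Update dataset version"):
    """Build the ordered commands for the final versioning step (nothing runs here)."""
    pointer = f"{dataset_path}.dvc"
    return [
        ["dvc", "add", dataset_path],       # hash the file and update the .dvc pointer
        ["dvc", "push"],                    # upload the data to the configured remote
        ["git", "add", pointer],            # stage the pointer file
        ["git", "commit", "-m", message],   # record the new dataset version in Git
        ["git", "push", "origin", branch],  # publish the pointer to GitHub
    ]


def run_all(commands, cwd):
    """Run each command in order and stop at the first failure."""
    for cmd in commands:
        subprocess.run(cmd, cwd=cwd, check=True)


commands = dvc_push_commands("phase-1-local-dev/datasets/employee_attrition.csv")
for cmd in commands:
    print(" ".join(cmd))
```

The order matters: the data is pushed to the remote before the pointer reaches Git, so nobody can pull a pointer whose data is not yet in S3.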

Pull Changes from the MLOps Repo

Pull changes from the upstream to get the latest updates from the MLOps repo. Check out this guide to learn how to keep your fork updated.

Prerequisite: One-Time DVC Setup

I assume you have followed the previous edition and pushed the employee_attrition.csv dataset to your S3 bucket using DVC. If not, please complete those steps first.

This is a prerequisite for this hands-on guide because the first task in the DAG uses dvc pull to fetch the dataset from S3.

📌 Key Insight

In real projects, setting up DVC configuration in the repository is a one-time task done by the Data, DevOps or Platform engineer on their local machine. Once the DVC configs are in place, you can track and version data from any tool.

Update the DAG Repo & Push to GitHub

Next you need to update the DAG script with your repository details.

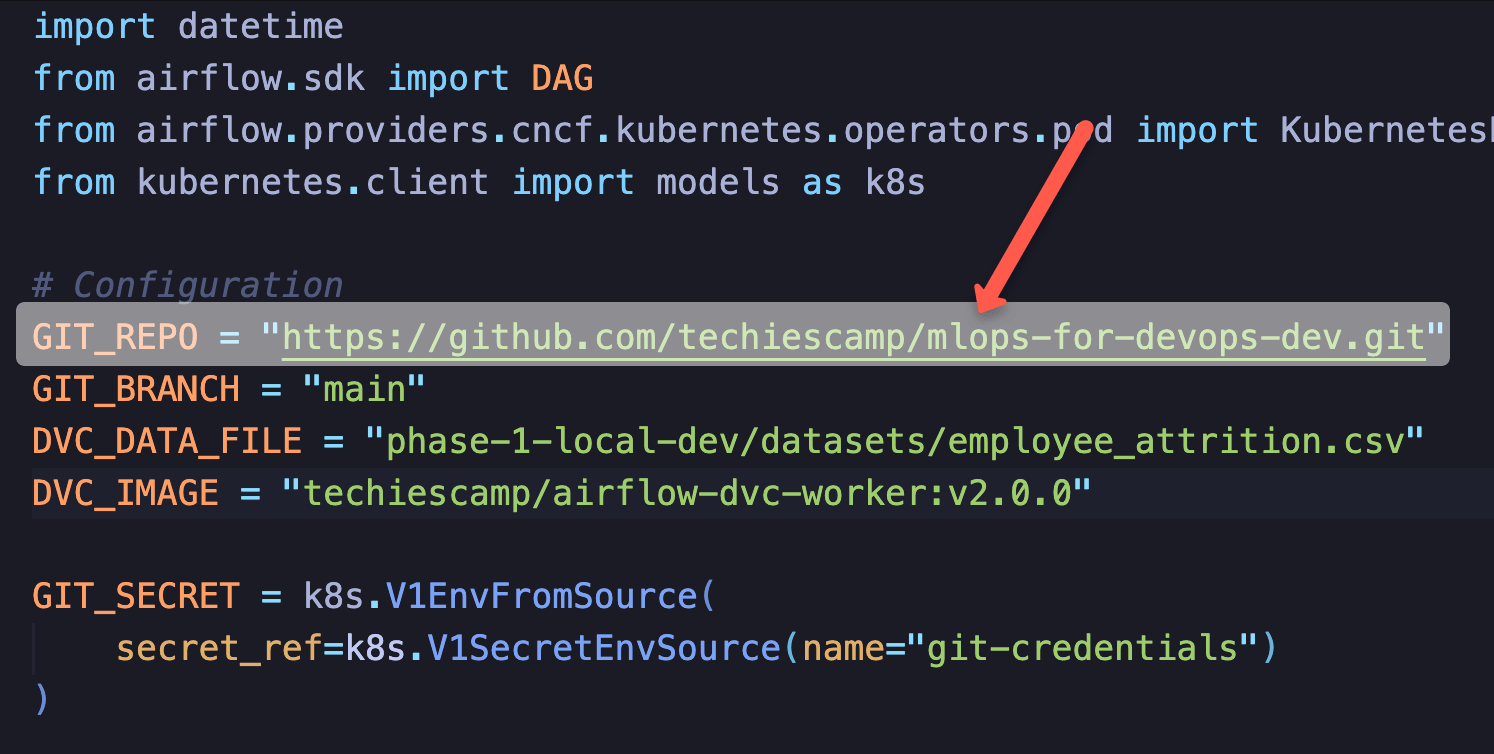

The dvc-pipeline.py file is located in the phase-2-enterprise-setup/airflow/dags directory. Replace the GIT_REPO value with your repository details as shown below.

Now push the updated code to GitHub.

$ git push origin main

Setting Up the Airflow DVC Pipeline on EKS

📌 Important Note

I am demonstrating this using an EKS setup. The same workflow works on any Kubernetes cluster. You just need to adjust the configurations accordingly.

Let's get started.

Step 1: Deploy an EKS Cluster

Deploy an EKS cluster with the Pod Identity add-on enabled.

I have included an eksctl cluster configuration file in the repository. You only need to update the VPC, Subnets, region and the publicKeyName details as per your environment. Everything else should work as is.

After updating the values, deploy the cluster using the following command.

$ cd platform-tools/eks

$ eksctl create cluster -f mlops-cluster.yaml

Step 2: Enable AWS Access for Airflow to Use S3

Once the cluster is up and running, execute the script.sh file in the eks folder. This script creates the S3 IAM role (Airflow-DVC-S3-Role) and associates the service account with Pod Identity. It allows the Airflow worker pods to write to the configured S3 bucket.

📌 Important Note: Update the AWS_REGION and DVC_BUCKET parameters in the script.sh with your values before running it.

$ chmod +x script.sh

$ ./script.sh create

Now the cluster is set up with all the required permissions for Airflow to work with the S3 bucket.

Step 3: Create a Secret for Git Credentials

As discussed earlier, the Airflow worker needs to clone and push to the MLOps repository to manage DVC. For this, we create a Kubernetes Secret with GitHub credentials.

In the DAG (using KubernetesPodOperator), we mount this Secret into the worker pod as environment variables.
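Inside the worker pod, a task can then read those environment variables to authenticate Git operations. The sketch below assumes the variables are exposed under the same names as the Secret keys (GIT_SYNC_USERNAME and GIT_SYNC_PASSWORD). The authenticated_repo_url helper and the example repository URL are hypothetical and not taken from the DAG in the repository.

```python
import os
from urllib.parse import quote


def authenticated_repo_url(repo_url, env=os.environ):
    """Insert username and token from environment variables into an HTTPS Git URL."""
    user = env["GIT_SYNC_USERNAME"]
    token = env["GIT_SYNC_PASSWORD"]
    scheme, rest = repo_url.split("://", 1)
    # Percent-encode both values so special characters cannot break the URL.
    return f"{scheme}://{quote(user, safe='')}:{quote(token, safe='')}@{rest}"


# Stand-in values; in the pod these would come from the mounted Secret.
example_env = {"GIT_SYNC_USERNAME": "octocat", "GIT_SYNC_PASSWORD": "ghp_example/token"}
url = authenticated_repo_url("https://github.com/example-org/example-repo.git", example_env)
print(url)
```

A URL built this way contains the token, so it should never be written to task logs.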

📌 Note: You can get the token from GitHub > Settings > Developer settings > Personal access tokens

Run the following command by replacing the values with your GitHub username and token.

kubectl -n airflow create secret generic git-credentials \
  --from-literal=GIT_SYNC_USERNAME=<your-github-username> \
  --from-literal=GIT_SYNC_PASSWORD=<your-github-token> \
  --from-literal=GITSYNC_USERNAME=<your-github-username> \
  --from-literal=GITSYNC_PASSWORD=<your-github-token>

Step 4: Create a Shared PVC for DAG Tasks

As discussed earlier, we need a Persistent Volume that can be shared between task pods to pass data across pipeline steps. In this setup, all tasks use a shared PVC to read and write the dataset.

Apply the pvc.yaml manifest available in the helm folder to create the shared persistent volume.

$ cd platform-tools/airflow/helm

$ kubectl apply -f pvc.yaml

📌 Important Note

The shared PVC approach is mainly useful for learning, testing, or tightly coupled pipelines. In production, you would replace this with Amazon S3 or Amazon EFS as the shared storage layer.

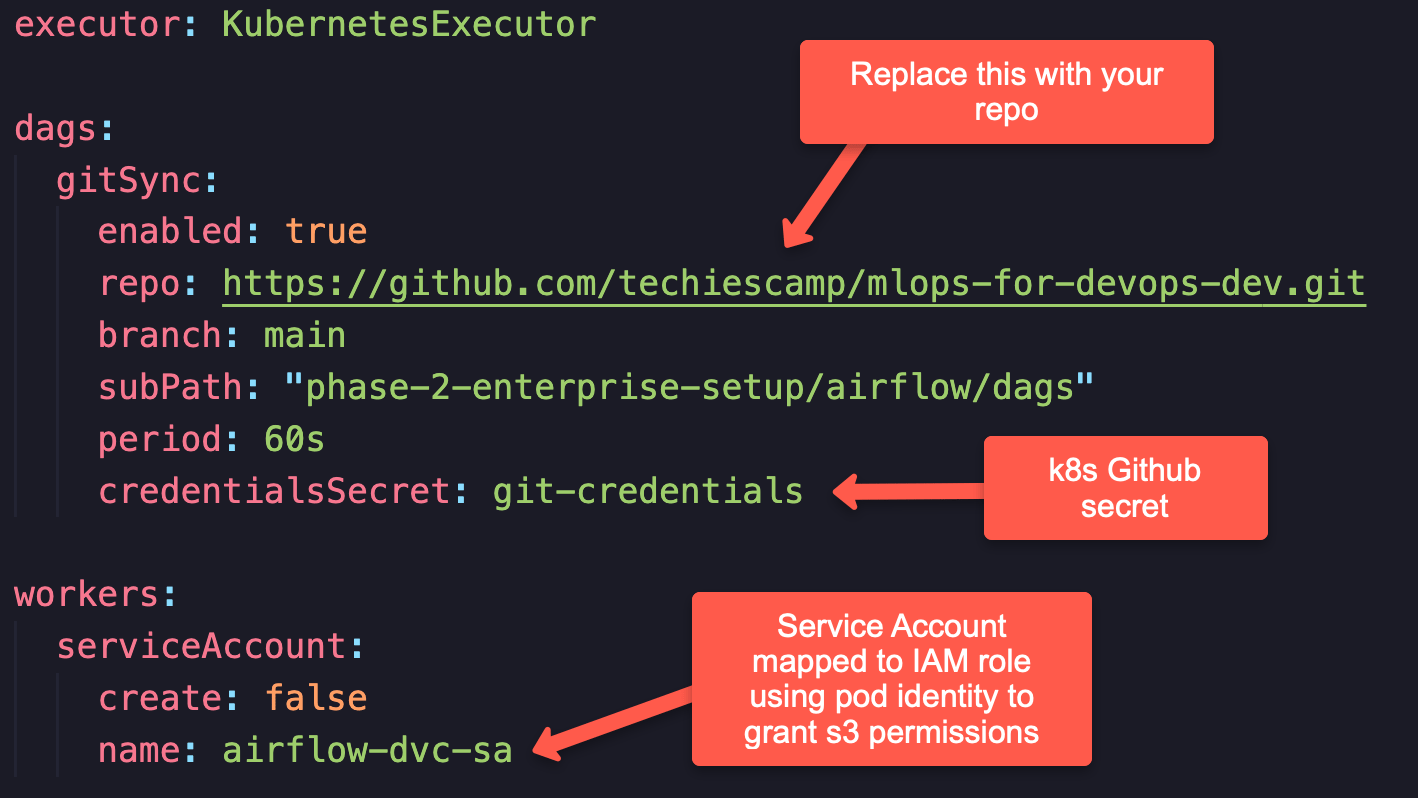

Step 5: Modify Helm Custom Values

The helm folder contains a customized values file (custom-values.yaml) configured to use the KubernetesExecutor for Airflow.

platform-tools/airflow/helm/custom-values.yaml

It also contains the gitSync configuration, which tells Airflow which repository folder to sync DAGs from. Here, you need to replace the existing Git repository with your repository details.

Step 6: Deploy Airflow Using Helm

Next, deploy Airflow in the cluster with Helm using the following commands.

$ cd platform-tools/airflow/helm

$ helm repo add apache-airflow https://airflow.apache.org

$ helm install airflow apache-airflow/airflow -n airflow --create-namespace -f custom-values.yaml

Once the command has run, check whether all the components are properly deployed.

$ kubectl -n airflow get po

Step 7: Access Apache Airflow UI

Use the following command to port-forward the Airflow API server and access the UI.

$ kubectl -n airflow port-forward svc/airflow-api-server 8080:8080

Open your web browser and navigate to http://localhost:8080. You will be prompted to log in. By default, both the username and password are admin.

📌 Note: In production, authentication methods such as LDAP or OAuth, along with SSL/TLS, are used to protect access to the Airflow web server.

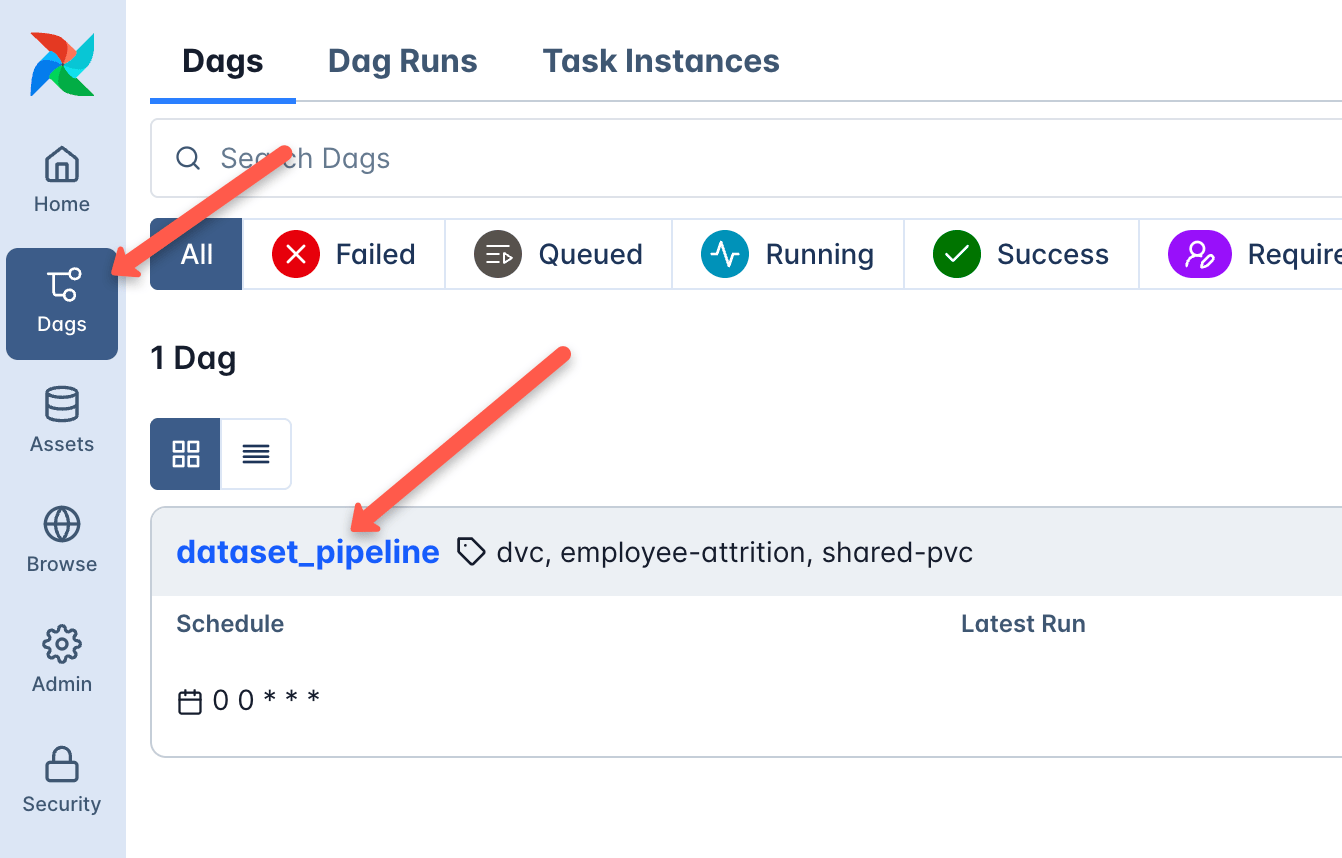

Step 8: Access the Dataset DAG

Now, go to the DAGs section in the Airflow UI. You should see the DAG listed there.

Since we configured gitSync with the MLOps repository through Helm, Airflow automatically syncs the dataset_pipeline DAG from the repository as shown below.

If you click on the DAG and open the Graph View, you can see the defined tasks in order, as shown below.

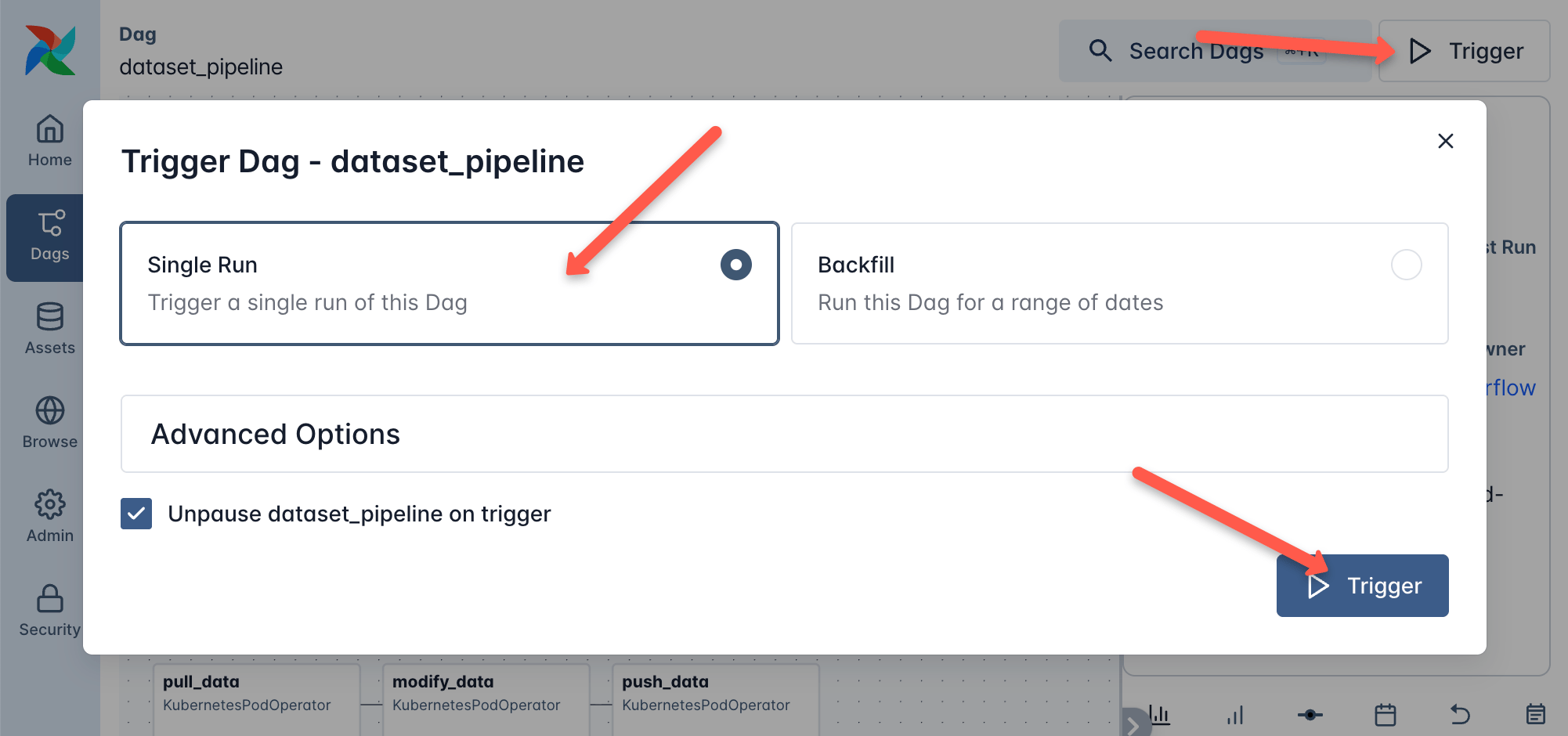

Step 9: Trigger the DAG

Now, let's trigger the DAG. Click the Trigger button, select the single run option, and then click Trigger again, as shown below.

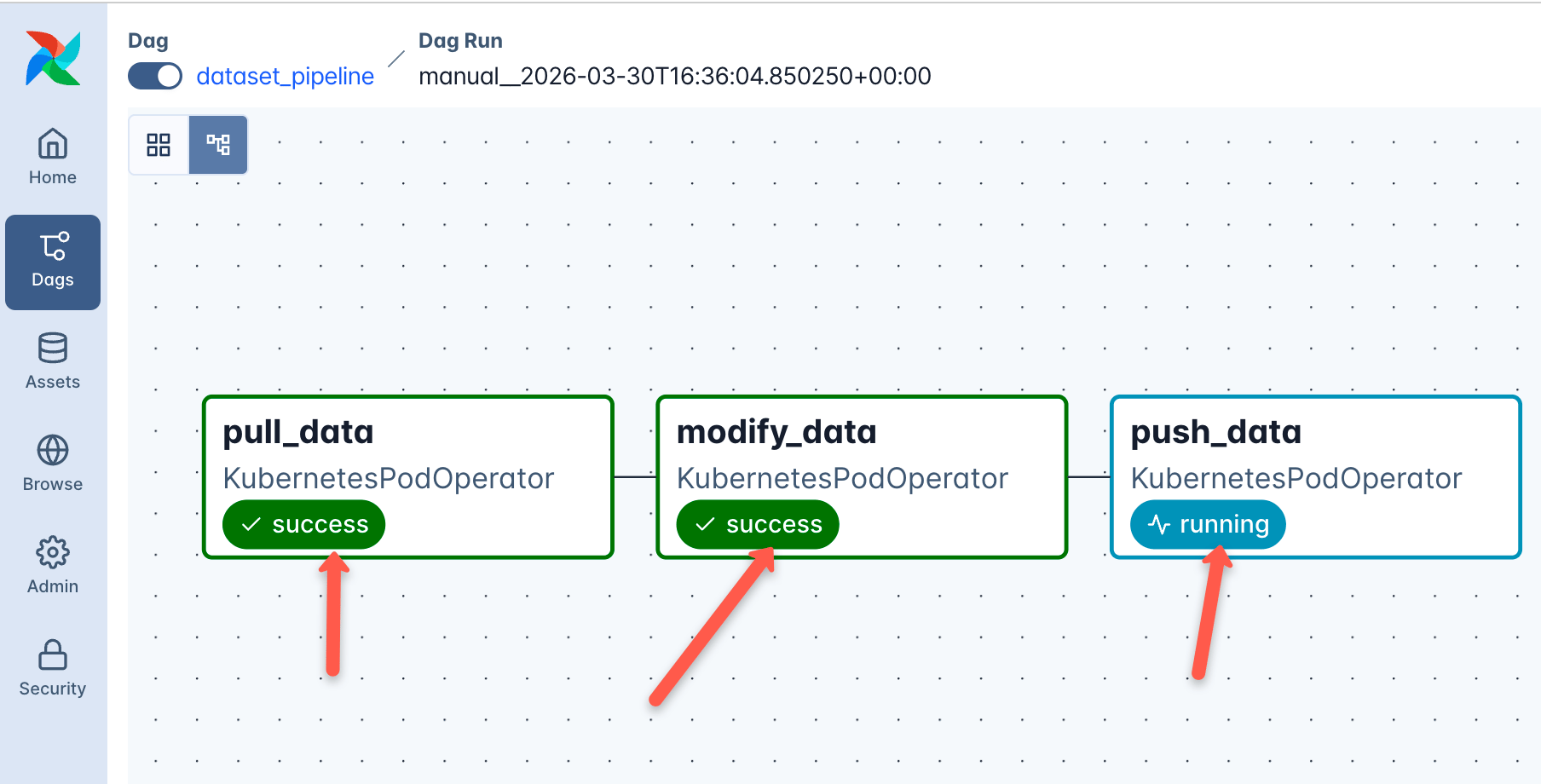

It will take a few minutes for the entire pipeline to run. You can check the status of each task, as shown below.

📌 Production DAG Triggering:

In real-world data and MLOps pipelines, DAGs are not always triggered the same way. There are three common patterns: scheduled (cron-based), event-driven (e.g., on data arrival), and API-triggered for ad-hoc runs from CI/CD pipelines via the Airflow REST API.
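For the API-triggered pattern, a CI/CD job essentially sends one authenticated POST request. The sketch below only builds that request and does not send it. It assumes the Airflow 3 stable REST API path (/api/v2/dags/<dag_id>/dagRuns) and bearer-token authentication. The build_trigger_request helper and the token placeholder are hypothetical, and Airflow 2 deployments use /api/v1 with a different auth setup, so check the API reference for your version.

```python
import json
import urllib.request


def build_trigger_request(base_url, dag_id, token, api_prefix="/api/v2"):
    """Build (but do not send) a POST request that asks Airflow to start a DAG run."""
    url = f"{base_url.rstrip('/')}{api_prefix}/dags/{dag_id}/dagRuns"
    # Assumption: a null logical_date lets Airflow treat this as an ad-hoc run.
    body = json.dumps({"logical_date": None}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
    )


request = build_trigger_request("http://localhost:8080", "dataset_pipeline", "<access-token>")
print(request.get_method(), request.full_url)
# Sending it would be: urllib.request.urlopen(request)
```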

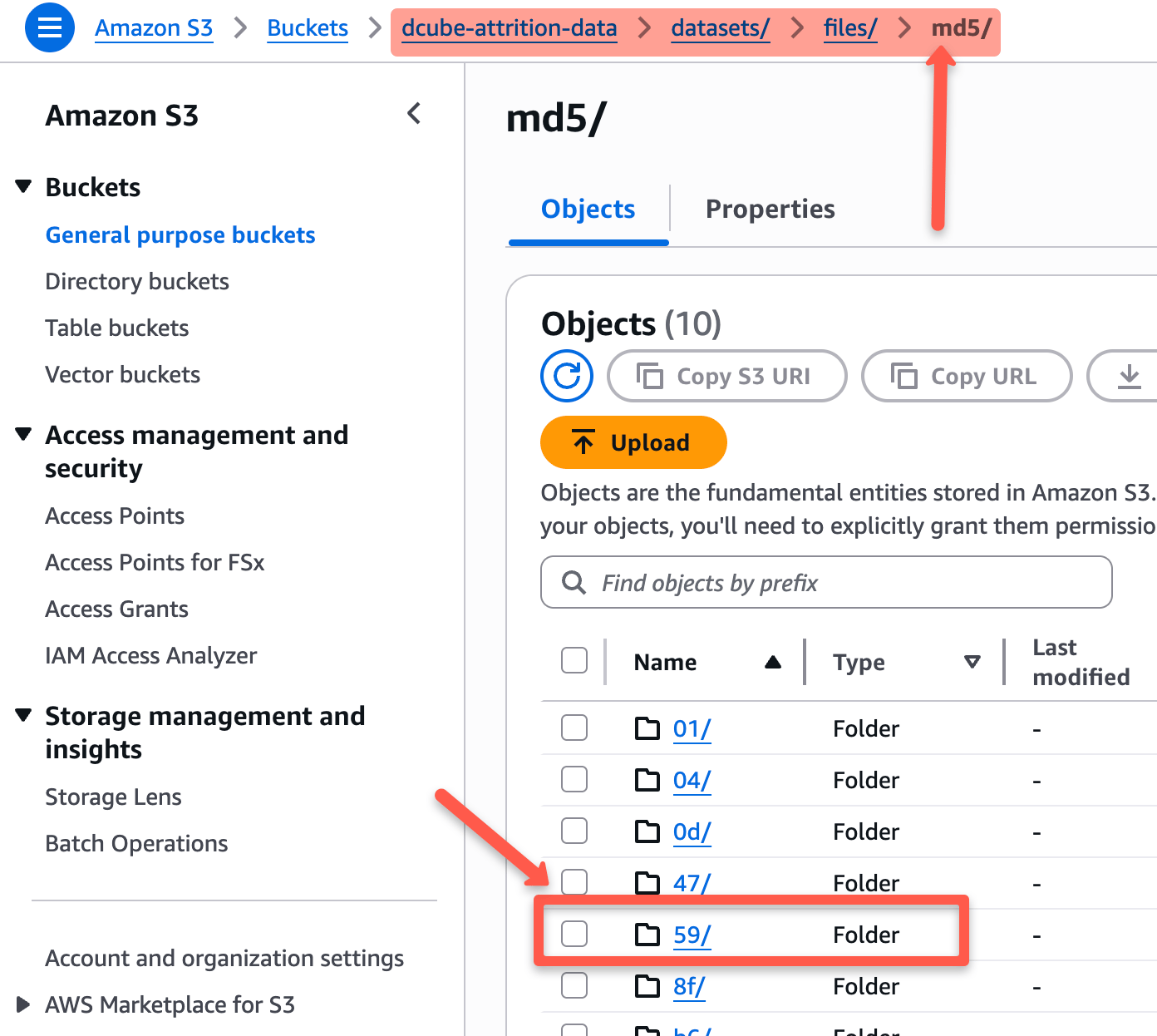

Step 10: Verify DVC Push to S3

Airflow Task 3 commits and pushes the updated .dvc pointer file to GitHub. So before running any DVC checks locally, you need to run git pull. Otherwise, your local .dvc file will be outdated and still point to the previous version instead of the one just pushed by Airflow.

$ git pull origin main

Now check what version DVC thinks should be in S3. You should see the md5 details in the output.

$ cat phase-1-local-dev/datasets/employee_attrition.csv.dvc

outs:
- md5: 5911ebf0fa91033fb323989b7c6d7fbc
  size: 9983309

In S3, DVC stores data using the MD5 hash. You will see folders named with the first two characters of the hash, and inside them, files named with the remaining part of the hash.
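To check this by hand, you can derive the expected object key from the pointer file. The sketch below follows the layout described above (two-character folder, then the rest of the hash). The md5_from_pointer and remote_key helpers and the datasets prefix default are illustrative assumptions. Newer DVC releases may add a further prefix such as files/md5 under the remote path, so confirm the exact layout against your own bucket.

```python
import re

# Text of the pointer file shown above.
POINTER_TEXT = """\
outs:
- md5: 5911ebf0fa91033fb323989b7c6d7fbc
  size: 9983309
"""


def md5_from_pointer(text):
    """Extract the first md5 value from the text of a .dvc pointer file."""
    match = re.search(r"^\s*-?\s*md5:\s*([0-9a-f]{32})\s*$", text, re.MULTILINE)
    if match is None:
        raise ValueError("no md5 entry found")
    return match.group(1)


def remote_key(md5, prefix="datasets"):
    """Map a hash to '<prefix>/<first 2 chars>/<remaining 30 chars>'."""
    return f"{prefix}/{md5[:2]}/{md5[2:]}"


digest = md5_from_pointer(POINTER_TEXT)
print(remote_key(digest))
```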

Now, any data scientist who wants to work with the latest version of the dataset can pull the latest changes from Git and use DVC to fetch the updated data. This is where the actual ML development begins, using the versioned dataset.

Cleanup the Setup

When you are done with the hands-on, tear down the EKS cluster to avoid unexpected AWS charges.

# Remove Pod Identity association and IAM role first

$ ./script.sh delete

# Delete the EKS cluster (takes 10-15 minutes)

$ eksctl delete cluster -f mlops-cluster.yaml

That's a Wrap!

You now have a fully automated data versioning pipeline running on Kubernetes.

Any data scientist can run git checkout <commit> followed by dvc pull and reproduce the exact dataset that was used for any training run, even months or years later.

What's Coming Next?

We have covered data ingestion, ETL, data versioning, and automation. But there is still one important piece missing before we get to model training.

In the next edition, we will look at the Feature Store. It is one of the critical components in a production MLOps pipeline.