👋 Hi! I’m Bibin Wilson. Every Saturday, I publish a deep-dive MLOps edition to help you upskill step by step. If someone forwarded this email to you, you can subscribe here so you never miss an update.

✉️ In Today’s MLOps Edition

In this edition, we will build our final working model. We will look at the following:

What is model training?

Training and creating the model artifact.

How to evaluate the model?

Cross-validation (checking if the model is stable)

Hyperparameter tuning (finding the best settings for the model)

Testing the model for real prediction

A production use case of Cloudflare (Training 1 million models)

Note: Some ML terms mentioned here may feel overwhelming at first. That's completely okay. You don't need to know the internals. The important thing is to understand the overall process through hands-on practice.

📦 MLOps Code Repository

All hands-on code for the entire MLOps series will be pushed to the DevOps to MLOps GitHub repository. We will refer to the code in this repository in every edition.

If you are using the repository, please give it a star.

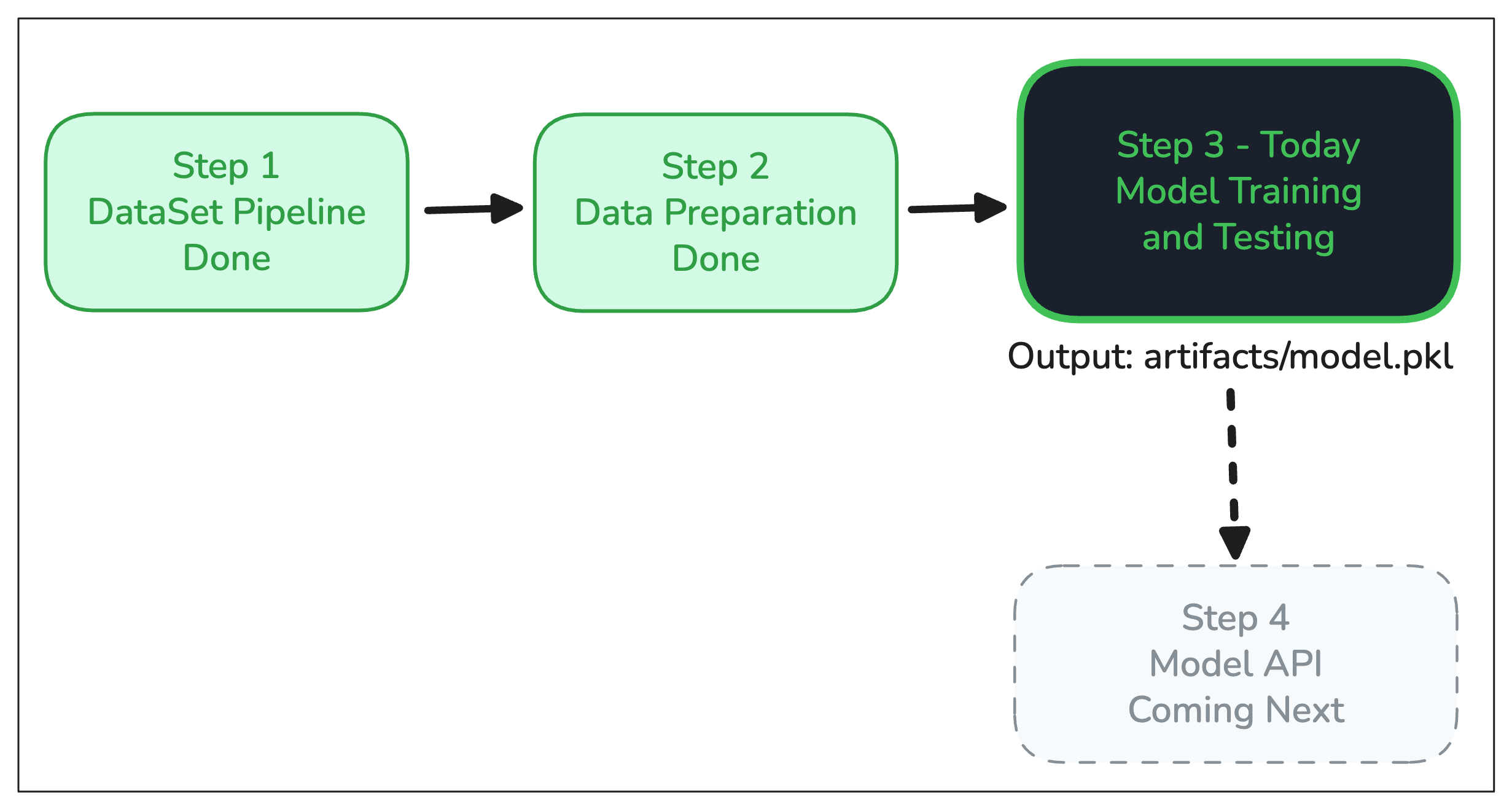

Where We Are in the Series

Before we dive in, here is where Step 3 sits inside Phase 1.

Missed the previous ones?

In the last edition, we looked at the data preparation stages. The final output was a scaled, balanced dataset split into train.csv and test.csv, ready for training our model.

Today, we use this dataset to train a model that predicts whether an employee will stay or leave based on the employee information provided.

First, Let's Clarify What a "Model" Actually Is

Before we get into training, let's clear up something that confuses a lot of people.

The word "model" gets used in two different ways, and that is where the confusion comes from.

Before training, a "model" is just a machine learning algorithm: a mathematical blueprint with no knowledge at all. It knows how to learn, but it has not learned anything yet. The same algorithm can be used for many different ML use cases.

Once the training is done, it is the same algorithm you started with, but it has learned values from our employee attrition dataset.

So when we say a model has “learned,” it means it has adjusted its internal settings, called parameters, so it can make better predictions on data.

So, when someone says "we trained the model," they mean that we ran data through the algorithm until it figured out the right internal settings to make good predictions. The output of that process is what we call the trained model.

It will make more sense once you are done with the hands-on labs.

What does “Training” mean in ML?

In simple terms, training is the process of teaching the machine how to make predictions.

It is similar to how we humans learn.

For example, let's say you are teaching a child to recognize dogs. You don't write rules like "if it has four legs, a tail, and barks, it's a dog." Instead, you show the child many examples of dogs, and over time, they learn to recognize dogs of all shapes and sizes.

Similarly, in machine learning, we feed the algorithm many examples (data), and it learns the patterns on its own.

In our use case, we feed the algorithm historical data about past employees with known outcomes (who stayed, who left). The model adjusts its internal settings to fit those patterns. Once training is done, those settings are locked in. That is your trained model.

💡 Key Insight

Because we already know the outcome for past employees (stayed or left), this is called a supervised learning problem. The algorithm learns by comparing its predictions against known answers and adjusting until it gets better.
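The sketch below makes "adjusting parameters until predictions match known answers" concrete. It is a minimal pure-Python example of supervised training: logistic regression fitted with plain gradient descent. It is only an illustration, not how scikit-learn implements LogisticRegression (scikit-learn uses optimized solvers such as lbfgs). The toy rows and feature names are made up.

```python
import math

def sigmoid(z: float) -> float:
    """Squash any number into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(weights: list[float], bias: float, x: list[float]) -> float:
    """Probability that this employee leaves, given the current parameters."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def train(rows, labels, lr=0.5, epochs=2000):
    """Fit logistic regression parameters with batch gradient descent."""
    weights = [0.0] * len(rows[0])  # before training: the model knows nothing
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(rows, labels):
            error = predict_proba(weights, bias, x) - y  # compare with the known answer
            for j, xj in enumerate(x):
                grad_w[j] += error * xj
            grad_b += error
        # Nudge every parameter in the direction that reduces the error.
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(rows)
    return weights, bias  # after training: these values are "the model"

# Made-up toy data: [years_at_company (scaled 0-1), works_overtime (0/1)]
rows = [[0.1, 1], [0.2, 1], [0.3, 1], [0.7, 0], [0.8, 0], [0.9, 0]]
labels = [1, 1, 1, 0, 0, 0]  # 1 = left, 0 = stayed

weights, bias = train(rows, labels)
print("learned parameters:", [round(w, 2) for w in weights], round(bias, 2))
```

The algorithm (the `train` function) stays the same no matter which dataset you pass it. Only the learned `weights` and `bias` change, and those values are what a trained model file stores.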

Choosing an Algorithm For the Model

Data scientists need to choose an algorithm for the model.

So who creates these algorithms? Researchers and academics. Logistic Regression was developed by statisticians in the 1950s. XGBoost came from a research paper by Tianqi Chen in 2016.

These algorithms are then packaged into open source libraries. For our project, we use the scikit-learn Python library, which bundles hundreds of algorithms.

This means a Data Scientist does not write an algorithm from scratch. They pick one from a library, configure it, and validate that it works well on their data.

It is the same way a DevOps engineer does not write a load balancer from scratch. You pick Nginx or Envoy, which others have built, and configure it for your use case.

For our project, we picked the Logistic Regression algorithm. Here is why.

Our dataset has a mix of numbers (years at company, age) and categories (job level, department). Once the categories are encoded as numbers, Logistic Regression handles that mix without much extra work.
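Logistic Regression only works with numbers, so category columns are usually one-hot encoded first. With one-hot encoding, each category becomes its own 0/1 column. Below is a minimal sketch of that idea. The column names and department values are hypothetical and are not taken from the project's dataset.

```python
def one_hot(value: str, categories: list[str]) -> list[int]:
    """Turn one category into a 0/1 vector with a single 1 at its position."""
    if value not in categories:
        raise ValueError(f"unknown category: {value!r}")
    return [1 if value == c else 0 for c in categories]

# Hypothetical category list; the real dataset's values may differ.
DEPARTMENTS = ["Engineering", "Finance", "Sales"]

def encode_employee(age: float, years_at_company: float, department: str) -> list[float]:
    """Numeric columns pass through; the categorical column becomes 0/1 flags."""
    return [age, years_at_company, *one_hot(department, DEPARTMENTS)]

print(encode_employee(34, 5, "Sales"))  # [34, 5, 0, 0, 1]
```

In scikit-learn, `OneHotEncoder` does this job and can run as a step inside a Pipeline, so the same encoding is applied at training and prediction time.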

Important Note

Algorithm selection is the Data Scientist's decision. It involves a structured, evidence-based process. They evaluate interpretability, performance, and complexity.

As a DevOps or Platform engineer, your job is not to pick the algorithm. Your job is to understand what artifact it produces and how to deploy and version it.
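One simple way to version a model artifact is to derive the version from the file's content. The sketch below hashes a file with SHA-256, so identical bytes always get the same ID and any retrained model gets a new one. The function name and the 12-character short ID are illustrative choices and are not part of the project repository.

```python
import hashlib
import tempfile
from pathlib import Path

def artifact_version(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return a short, content-based version ID for a model artifact."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        # Read in chunks so large artifacts do not need to fit in memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()[:12]

# Demo on a throwaway file that stands in for artifacts/model.pkl.
with tempfile.TemporaryDirectory() as tmp:
    demo = Path(tmp) / "model.pkl"
    demo.write_bytes(b"pretend these are pickled model bytes")
    demo_version = artifact_version(demo)

print("demo version:", demo_version)
```

A content hash like this can serve as an image tag or a registry label. If you deploy the same bytes twice, they carry the same version.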

Project Structure

If you have been following along, you already have the repository cloned from Step 2. Pull the latest changes to get the new model training files.

```
cd mlops-for-devops/phase-1-local-dev
git pull origin main
```

Inside the src/ folder, you will now see two new folders, model_training and model_testing, alongside data_preparation.

```
src/
├── config/
│   └── paths.py
├── data_preparation/
│   ├── 01_ingestion.py
│   ├── 02_validation.py
│   ├── 03_eda.py
│   ├── 04_cleaning.py
│   ├── 05_feature_engg.py
│   └── 06_preprocessing.py
├── model_training/
│   ├── 01_training.py
│   ├── 02_evaluation.py
│   ├── 03_cross_validation.py
│   └── 04_tuning.py
└── model_testing/
    └── predict.py
```

Model Development Stages

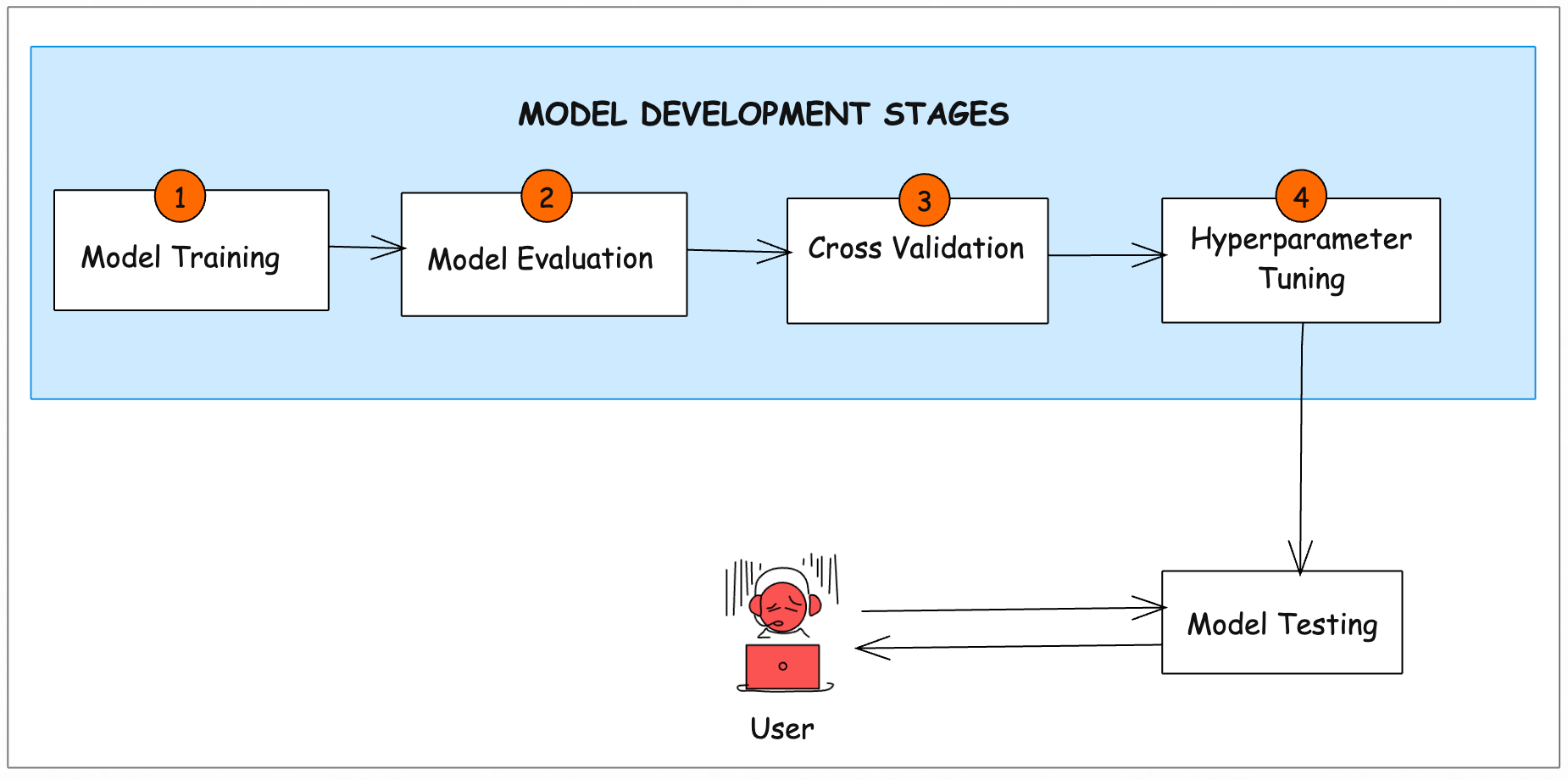

The model development module runs four stages in sequence. Each stage takes the output of the previous one. Here is how it looks end to end.

Now, let's understand each stage by executing the training scripts.

1. Training the Model (01_training.py)

The 01_training.py script uses the scikit-learn library.

This script loads train.csv and test.csv from the processed folder, builds a scikit-learn Pipeline, and trains a Logistic Regression model on the employee attrition data.

In this context, “builds a pipeline” means it sets up a sequence of steps inside the code itself.

There is a lot of ML theory behind this step, but it is out of scope for our learning. In short, here is what it does:

Loads the training data

Builds a pipeline that prepares the data then trains a model

Trains the model using Logistic Regression

Saves the trained model to disk
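The sketch below shows what "a pipeline that prepares the data then trains a model" means, in minimal pure Python. It mirrors the shape of scikit-learn's Pipeline (an ordered list of named steps whose last step is the model), but it is not scikit-learn's implementation. The min-max scaler, the nearest-centroid stand-in model, and the data are illustrative assumptions. The real script uses Logistic Regression.

```python
class MinMaxScaler:
    """Preparation step: learn each column's min/max, then rescale values to 0-1."""

    def fit(self, rows):
        columns = list(zip(*rows))
        self.mins = [min(col) for col in columns]
        self.maxs = [max(col) for col in columns]
        return self

    def transform(self, rows):
        return [
            [(v - lo) / (hi - lo) if hi > lo else 0.0
             for v, lo, hi in zip(row, self.mins, self.maxs)]
            for row in rows
        ]


class NearestCentroid:
    """Stand-in model: predict the class whose average row is closest."""

    def fit(self, rows, labels):
        self.centroids = {}
        for label in set(labels):
            members = [r for r, y in zip(rows, labels) if y == label]
            self.centroids[label] = [sum(col) / len(members) for col in zip(*members)]
        return self

    def predict(self, rows):
        def distance(a, b):
            return sum((x - y) ** 2 for x, y in zip(a, b))
        return [min(self.centroids, key=lambda lbl: distance(row, self.centroids[lbl]))
                for row in rows]


class Pipeline:
    """Run the preparation steps in order, then the model (the last step)."""

    def __init__(self, steps):
        self.steps = steps  # list of (name, step) pairs

    def fit(self, rows, labels):
        for _, step in self.steps[:-1]:
            rows = step.fit(rows).transform(rows)  # learn preparation from training data
        self.steps[-1][1].fit(rows, labels)
        return self

    def predict(self, rows):
        for _, step in self.steps[:-1]:
            rows = step.transform(rows)  # reuse what was learned during fit()
        return self.steps[-1][1].predict(rows)


# Made-up rows: [age, years_at_company]; 1 = left, 0 = stayed
rows = [[25, 1], [28, 2], [45, 15], [50, 20]]
labels = [1, 1, 0, 0]
pipe = Pipeline([("scale", MinMaxScaler()), ("model", NearestCentroid())])
pipe.fit(rows, labels)
print(pipe.predict([[27, 1], [48, 18]]))  # [1, 0]
```

The key design point is that the scaler learns its min/max values only during `fit()`. `predict()` then reuses those values, so new data is prepared exactly the same way as the training data. Saving the whole pipeline, not just the model, keeps those two parts together.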

Let's execute the training script.

```
python -m model_training.01_training
```

Once executed, the script generates the model artifact (model.pkl) in the artifacts folder. That is our trained model.

You might ask: why .pkl? It stands for pickle, Python's built-in format for serializing (saving) objects to a file.

When you save a trained model as a .pkl file, you are freezing its entire state. All the learned patterns, all the internal settings (parameters), everything.
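Here is a minimal sketch of that freeze-and-restore round trip using Python's built-in pickle module. A plain dict of made-up parameters stands in for the trained model object.

```python
import pickle
import tempfile
from pathlib import Path

# Made-up "learned" values standing in for a trained model object.
model = {"algorithm": "logistic_regression", "weights": [-1.8, 2.4], "bias": -0.3}

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "model.pkl"
    with path.open("wb") as f:
        pickle.dump(model, f)      # freeze the object's full state to disk
    with path.open("rb") as f:
        restored = pickle.load(f)  # bring it back with every value intact
    size_bytes = path.stat().st_size

print(restored, f"({size_bytes} bytes on disk)")
```

One caution for platform engineers: `pickle.load` can execute arbitrary code. Only load .pkl files from sources you trust, such as your own artifact store.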

Real World Context: How Big Can Models Get?

Our model.pkl is tiny, just a few kilobytes. But model sizes vary enormously depending on the type.

For example, large Llama models range from roughly 40 GB to 140 GB. A frontier model like GPT-4 is estimated to be anywhere from 500 GB to several TB.

This is why serving infrastructure matters so much. Our model.pkl loads in milliseconds in a small container. An LLM, on the other hand, needs multiple high-memory GPUs just to load. The MLOps challenge scales with the model size.
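A rough rule of thumb explains those numbers: raw weights take about (number of parameters) × (bytes per parameter). The sketch below uses a 70-billion-parameter model as an example. It ignores runtime overhead such as activations and caches, so real memory needs are higher.

```python
def weights_size_gb(num_params: float, bytes_per_param: float) -> float:
    """Rough size of the raw weights in decimal gigabytes (ignores runtime overhead)."""
    return num_params * bytes_per_param / 1e9

# A 70-billion-parameter model (an assumed example size) at common precisions.
for precision, nbytes in [("fp16/bf16", 2), ("int8", 1), ("4-bit", 0.5)]:
    print(f"{precision:>9}: ~{weights_size_gb(70e9, nbytes):.0f} GB")
# prints ~140 GB, ~70 GB and ~35 GB
```

At 16-bit precision that comes to about 140 GB, and at 4-bit about 35 GB. That roughly matches the 40 GB to 140 GB range mentioned above.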

2. Evaluating the Model (02_evaluation.py)

Training is the easy part. To know whether the model predicts as expected, we need to evaluate it and check whether it correctly predicts “leave” or “stay”. That is what this script does.

It runs the trained model against the test.csv dataset, which contains data the model has never seen.

Let's execute the evaluation script.

```
python -m model_training.02_evaluation
```

The output will show the key evaluation metrics for our Employee Attrition model.

Here is what these key metrics tell us.

Accuracy (68%): This tells us how often the model’s prediction matches the actual result overall.

Precision (65%): This measures how often the model’s prediction of “leave” was actually correct. In other words, when the model says “this person will leave,” it is right 65% of the time.

Recall (71%): This tells us how many of the employees who actually left were correctly identified by the model. It answers the question: out of all real “left” cases, how many did the model find?

Since our goal is to catch at-risk employees early, recall is the most important metric for this use case. The model correctly identified 71% of them.

The following image shows the four possible outcomes. False Negatives are the costliest, which is exactly why Recall is our primary metric.
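All three metrics above come from counting those four outcomes. The minimal sketch below computes them from lists of actual and predicted labels (1 = leave, 0 = stay). The ten labels are made up for illustration and are not the project's results.

```python
def evaluate(actual, predicted, positive=1):
    """Count the four outcomes and derive accuracy, precision and recall."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a == positive and p == positive)  # left, predicted leave
    fp = sum(1 for a, p in pairs if a != positive and p == positive)  # stayed, predicted leave
    fn = sum(1 for a, p in pairs if a == positive and p != positive)  # left, predicted stay
    tn = len(pairs) - tp - fp - fn                                    # stayed, predicted stay
    return {
        "tp": tp, "fp": fp, "fn": fn, "tn": tn,
        "accuracy": (tp + tn) / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }

# Made-up labels for ten employees.
actual    = [1, 1, 1, 1, 0, 0, 0, 0, 0, 1]
predicted = [1, 1, 1, 0, 0, 0, 1, 0, 0, 0]
m = evaluate(actual, predicted)
print(f"accuracy={m['accuracy']:.2f} precision={m['precision']:.2f} recall={m['recall']:.2f}")
# accuracy=0.70 precision=0.75 recall=0.60
```

The two false negatives here are employees who left but were predicted to stay. They are exactly what pulls recall down.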

3. Cross-Validation (03_cross_validation.py)

The model scored well on one train/test split. But how do we know the model is stable and not overfitting?

Overfitting means the model learns the training data too exactly, so it cannot make good predictions on new data.

Cross-validation checks this. This script splits the dataset 5 different ways and trains and tests the model on each split. If the scores are consistent across all 5, the model is stable and it is learning real patterns, not memorizing specific rows.
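The splitting idea can be sketched in pure Python. The cross-validation script prints a "Strat cv score", which suggests stratified splitting: each fold keeps roughly the same mix of "stayed" and "left" rows. The sketch below is a simplified version of that idea. It does not shuffle, it is not scikit-learn's StratifiedKFold implementation, and the labels are made up.

```python
from collections import defaultdict

def stratified_k_fold(labels, k=5):
    """Yield (train_idx, test_idx) pairs; each fold keeps roughly the same class mix."""
    fold_of = [0] * len(labels)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    for indices in by_class.values():
        for position, i in enumerate(indices):
            fold_of[i] = position % k  # deal each class's rows round-robin into folds
    for fold in range(k):
        test_idx = [i for i in range(len(labels)) if fold_of[i] == fold]
        train_idx = [i for i in range(len(labels)) if fold_of[i] != fold]
        yield train_idx, test_idx

labels = [0] * 10 + [1] * 10  # made-up: 10 stayed, 10 left
folds = list(stratified_k_fold(labels, k=5))
for n, (train_idx, test_idx) in enumerate(folds, start=1):
    left = sum(labels[i] for i in test_idx)
    print(f"fold {n}: train={len(train_idx)} test={len(test_idx)} (left={left})")
```

In a real run, each `train_idx` is used to fit a fresh model and each `test_idx` to score it. The five scores are then typically averaged into one number, and a small spread between them is the sign of a stable model.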

Let's execute it.

```
$ python -m model_training.03_cross_validation
Strat cv score: 70.64252696071239
```

The cross-validation score we obtained (~70.6%) is very close to the recall we measured earlier on the test set (~71%), which suggests that the model is stable and not overfitting.

Now that the model performs consistently, we can move on to the next step: finding the best settings through hyperparameter tuning.

4. Hyperparameter Tuning (04_tuning.py)

Every algorithm has settings you can adjust. These are called hyperparameters.

Hyperparameters are chosen before training and set by the person building the model (data scientists or ML engineers).

Proper tuning of these hyperparameters helps the model avoid being too simple (missing patterns) or too complex (memorizing the training data), so it performs better on new, unseen data.

In this stage, data scientists or ML engineers try different settings and search for a better configuration to improve the model.

In the tuning script, we use scikit-learn's GridSearchCV. It trains and cross-validates the model for every combination of hyperparameter values in a predefined grid, then picks the best combination based on Recall.
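Conceptually, grid search is just a loop over every combination, as the minimal pure-Python sketch below shows. The grid values are assumptions and are not necessarily what 04_tuning.py searches. `placeholder_score` is a made-up stand-in for "train, cross-validate, and return mean recall", so its "best" result says nothing about the real dataset.

```python
from itertools import product

def grid_search(param_grid, score_fn):
    """Score every combination in param_grid and return the best one."""
    names = list(param_grid)
    best_params, best_score, tried = None, float("-inf"), 0
    for values in product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        score = score_fn(params)  # GridSearchCV would run cross-validation here
        tried += 1
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score, tried

# Assumed grid for illustration only.
param_grid = {"C": [0.01, 0.1, 1, 10], "solver": ["lbfgs", "liblinear"]}

def placeholder_score(params):
    """Made-up scorer that simply prefers C close to 1. Not a real model score."""
    return -abs(params["C"] - 1)

best, score, tried = grid_search(param_grid, placeholder_score)
folds = 5
print(f"tried {tried} combinations -> {tried * folds} model fits with {folds}-fold CV")
print("best (placeholder) params:", best)
```

From an infrastructure point of view, the multiplication matters most. The compute cost of GridSearchCV grows with every value you add to the grid.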

Let's execute it.

```
python -m model_training.04_tuning
```

The output shows the best parameters are C=1, solver='lbfgs', and max_iter=1000. As a DevOps engineer, you don’t need to understand these details to run or deploy the model.

Overall, the tuning confirms that the model artifact, artifacts/model.pkl, is well-tuned and ready for deployment.

The tuning script also saves a second file artifacts/metrics.json. It contains the final evaluation scores for the tuned model (Accuracy & Recall).

In production, the CI/CD pipeline will use this file. If Recall drops below a set threshold on a new training run, the pipeline should fail and block the new model from being deployed.
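A CI gate for this can be a few lines of Python. The sketch below assumes metrics.json has a top-level "recall" key stored as a fraction between 0 and 1, and it uses a hypothetical threshold of 0.70. The script name check_recall_gate.py is also hypothetical. Check the real file's keys and agree on the threshold with your data scientists before using something like this.

```python
import json
import sys
from pathlib import Path

RECALL_THRESHOLD = 0.70  # hypothetical minimum recall agreed with the data scientists

def passes_gate(metrics: dict, threshold: float = RECALL_THRESHOLD) -> bool:
    """Return True if the new model's recall meets the threshold."""
    # Assumption: recall is stored under "recall" as a 0-1 fraction.
    return float(metrics["recall"]) >= threshold

def main(metrics_path: Path) -> int:
    metrics = json.loads(metrics_path.read_text())
    if passes_gate(metrics):
        print(f"OK: recall {metrics['recall']} >= {RECALL_THRESHOLD}")
        return 0
    print(f"FAIL: recall {metrics['recall']} < {RECALL_THRESHOLD}, blocking deployment")
    return 1  # a non-zero exit code fails the CI job

# Only run the check when a metrics path is passed, e.g.
#   python check_recall_gate.py artifacts/metrics.json
if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(Path(sys.argv[1])))
```

Because the script signals failure through its exit code, any CI system (GitHub Actions, GitLab CI, Jenkins) can use it as a blocking step without extra integration.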

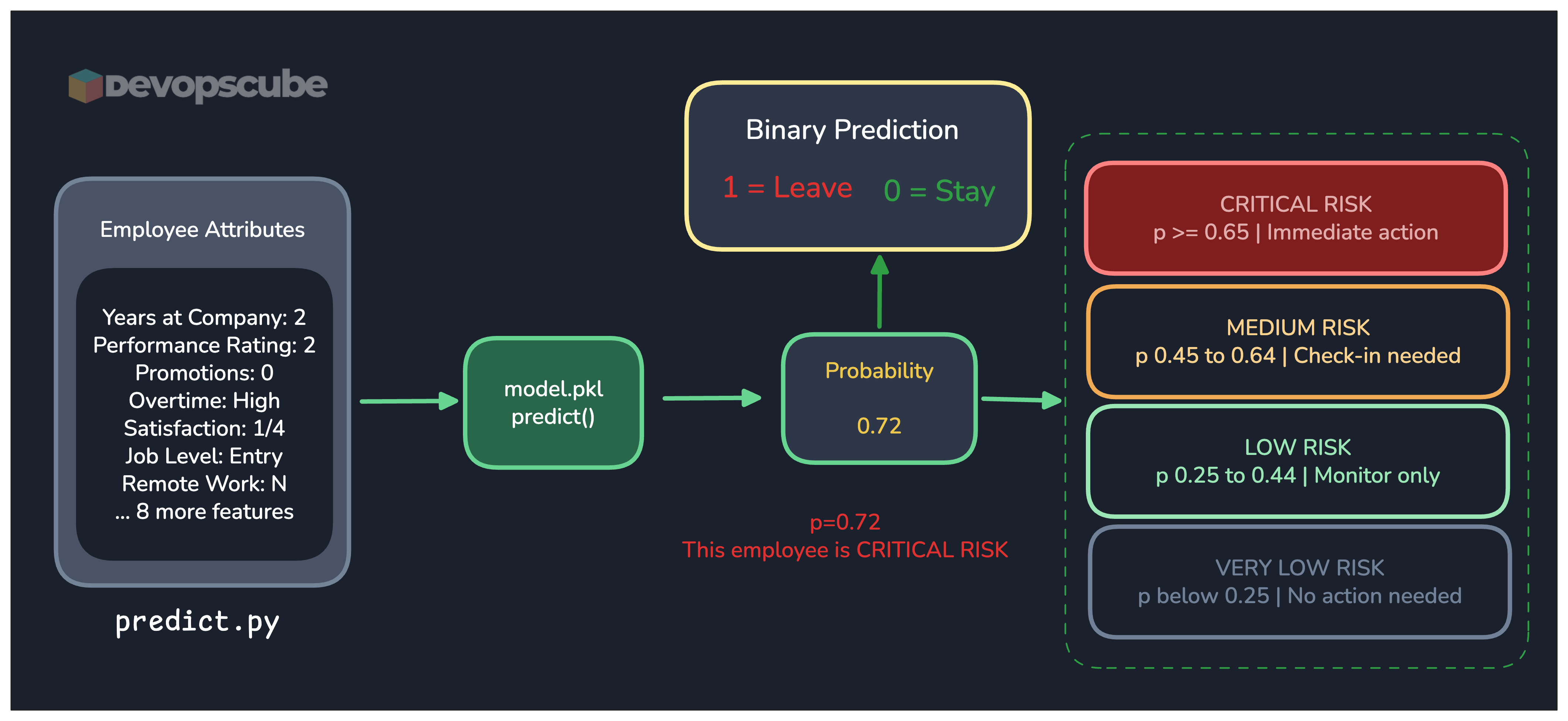

The following image gives you a mental model of what we have done so far.

Testing the Model (Inferencing)

Now we have a trained model that can predict if an employee will stay or leave.

The next step is to use that trained model to make predictions on new, unseen data. We call this model inference.

When we feed employee details to the model, it produces a binary outcome, meaning the prediction is either 0 or 1.

Here's What the Output Means

1 = Employee is predicted to Leave

0 = Employee is predicted to Stay

It also gives a risk tier based on the probability score. This way you know not just whether someone will leave, but how urgently to act.
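The mapping from probability to prediction and risk tier can be sketched as below. The strict 0.5 cut-off matches what scikit-learn's `predict()` uses for a binary Logistic Regression. The tier boundaries are hypothetical and may differ from those in predict.py.

```python
def interpret(probability_of_leaving: float) -> tuple[int, str]:
    """Map P(leave) to a 0/1 prediction and a risk tier (hypothetical cut-offs)."""
    prediction = 1 if probability_of_leaving > 0.5 else 0
    tiers = [(0.2, "Very Low"), (0.4, "Low"), (0.6, "Medium"), (0.8, "High")]
    for upper, tier in tiers:
        if probability_of_leaving < upper:
            return prediction, tier
    return prediction, "Very High"

print(interpret(0.1471))  # (0, 'Very Low')
```

Keeping the tiers separate from the 0/1 prediction lets HR prioritize follow-ups without retraining anything. Changing the cut-offs is a code change, not a model change.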

The following image gives you a mental model.

Let's run the testing script.

```
python -m model_testing.predict
```

The script will prompt you to enter 15 employee attributes one by one. It then automatically derives 4 additional features and passes all 19 to the model.

Once you enter the details in the prompt, you will see the final output based on the information you have chosen for the employee.

```
prediction value: 0
😃 Stay
⏰ Risk: Very Low (0.1471)
```

You now have a fully trained model saved in artifacts/model.pkl, and you have successfully tested it locally.

What's Next!

We can't hand this model to end users and expect them to run it from a CLI or a Python script, right?

We need to give the end user (e.g. HR personnel) an app that uses this model at the backend.

So in the next edition, we will wrap model.pkl in a REST API using FastAPI. Then we will use KServe on Kubernetes to serve the model.

One HTTP request in. One attrition prediction out. That is the first step in turning this local file into a real deployable service.

🧱 Production Use Case: How Cloudflare Trains ~1 Million Models Per Day

Cloudflare protects millions of websites from DDoS attacks. To do that at scale, they train a separate ML model for every customer website: roughly one million models every single day.

Each model is small and fast to train. It learns what "normal" traffic looks like for that specific website, then flags anything that deviates from that baseline as a potential attack.
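The "learn normal, flag deviations" idea can be illustrated with a per-site z-score baseline. This is a deliberately simple stand-in chosen for illustration, not the model Cloudflare describes. The traffic numbers and the 4-sigma threshold are made up.

```python
from statistics import mean, stdev

def fit_baseline(requests_per_interval: list[float]) -> tuple[float, float]:
    """'Train' on one site's history: remember its typical level and spread."""
    return mean(requests_per_interval), stdev(requests_per_interval)

def is_anomalous(value: float, baseline: tuple[float, float], z_threshold: float = 4.0) -> bool:
    """Flag an interval whose traffic is far outside the site's normal range."""
    mu, sigma = baseline
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold

# Made-up requests per 5-minute interval for a single website.
history = [100, 110, 95, 105, 98, 102, 107, 93]
baseline = fit_baseline(history)
print(is_anomalous(104, baseline))   # False: looks like normal traffic
print(is_anomalous(5000, baseline))  # True: far outside the baseline
```

In this sketch, each site's baseline is small and independent of every other site's, so training them is an embarrassingly parallel batch job.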

They retrain the models once per day, in batch, using 4 weeks of historical traffic data per website, chunked into 5-minute intervals. Each day, the previous day's traffic is added to the window (and the oldest day drops out), and the model is retrained from scratch on that updated dataset.
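Here is a minimal sketch of that rolling 4-week window. There are 24 × 60 / 5 = 288 five-minute buckets per day, and 288 × 28 days gives 8,064 buckets per website. A deque with a fixed maxlen stands in for whatever storage Cloudflare actually uses; that choice is an assumption made for illustration.

```python
from collections import deque

INTERVAL_MINUTES = 5
INTERVALS_PER_DAY = 24 * 60 // INTERVAL_MINUTES  # 288 five-minute buckets
WINDOW_DAYS = 28                                 # 4 weeks of history
WINDOW_SIZE = WINDOW_DAYS * INTERVALS_PER_DAY    # 8,064 buckets

def daily_update(buffer: deque, yesterday: list[int]) -> list[int]:
    """Add yesterday's buckets and return the snapshot to retrain on from scratch."""
    if len(yesterday) != INTERVALS_PER_DAY:
        raise ValueError(f"expected {INTERVALS_PER_DAY} buckets, got {len(yesterday)}")
    buffer.extend(yesterday)  # deque(maxlen=...) drops the oldest buckets automatically
    return list(buffer)

window: deque = deque(maxlen=WINDOW_SIZE)
# Simulate 30 days; each bucket just holds its day number so we can see what was kept.
for day in range(30):
    training_data = daily_update(window, [day] * INTERVALS_PER_DAY)

print(len(training_data), training_data[0], training_data[-1])  # 8064 2 29
```

After 30 simulated days, days 0 and 1 have rolled out of the window. The next retrain sees exactly the most recent 4 weeks.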

The entire training and inference pipeline runs on Apache Airflow deployed on Kubernetes.

👉 Read the full Cloudflare engineering blog post