👋 Hi! I’m Bibin Wilson. In each edition, I share practical tips, guides, and the latest trends in DevOps and MLOps to make your day-to-day DevOps tasks more efficient. If someone forwarded this email to you, you can subscribe here to never miss out!

✉️ In Today’s MLOps Edition

In this edition, we take the Employee Attrition model we trained earlier and turn it into a real application running on Kubernetes.

Here is what we will cover today.

Dockerizing the inference service

Dockerizing the frontend application

Why we need KServe

Serving the model using KServe

Deploying a frontend that interacts with the KServe inference endpoint

How large models are served in production

By the end of this edition, you will understand how a trained ML model becomes a running service that applications can call.

📦 MLOps Code Repository

All hands-on code for the entire MLOps series will be pushed to the DevOps to MLOps GitHub repository. We will refer to the code in this repository in every edition.

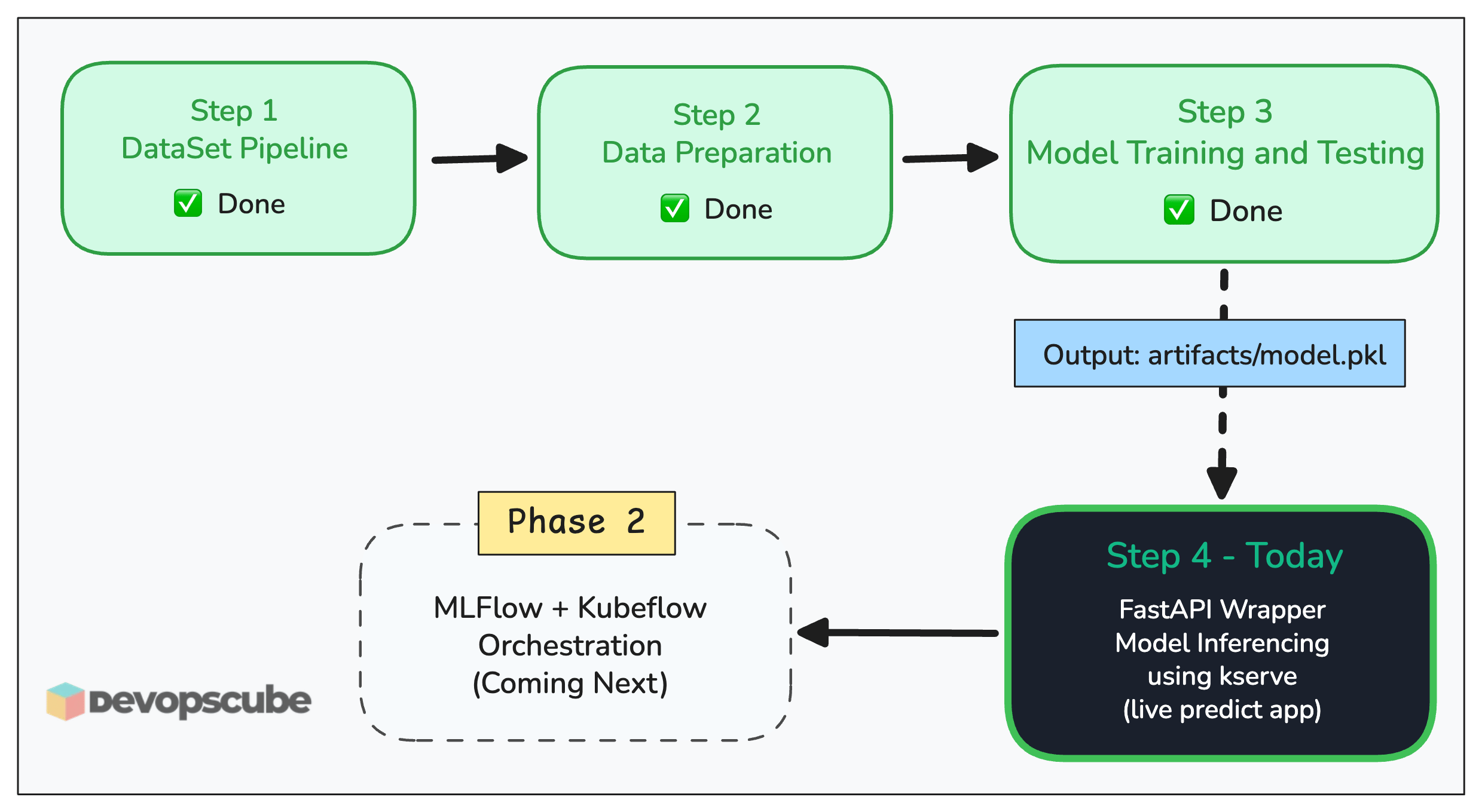

Where We Are in the Series

We are still in Phase 1, taking model.pkl all the way to a live inference endpoint on a local Kubernetes cluster.

Missed the previous editions?

In the last edition, we trained and tested the Employee Attrition model. We ran it through evaluation, cross-validation, and hyperparameter tuning.

The final output was artifacts/model.pkl.

Here is the problem. The model only works if you run a Python script manually from the CLI.

Have you ever wondered how a trained model actually becomes an API that applications can call?

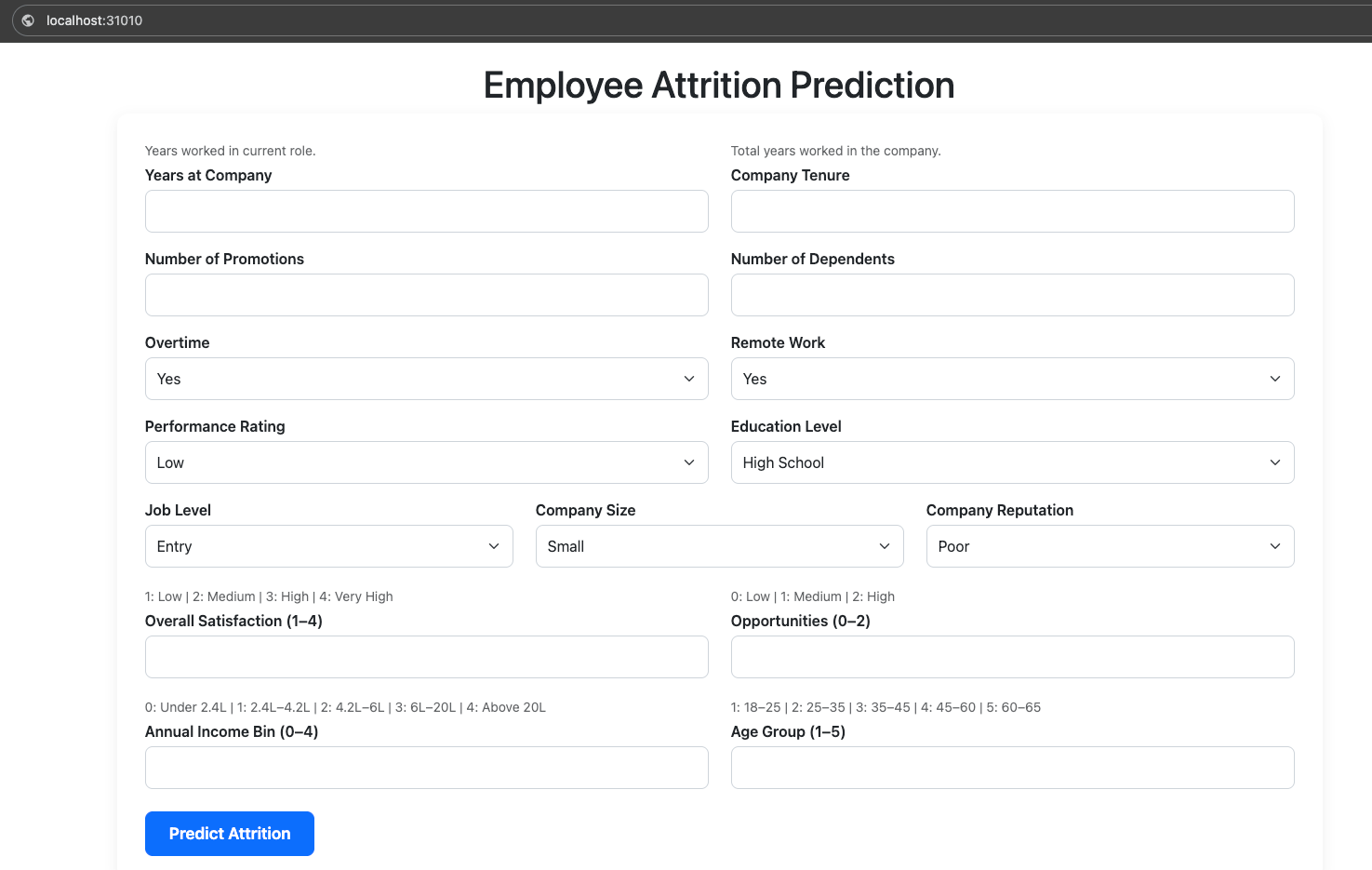

In our employee attrition use case, HR personnel need an app with a frontend so that they can predict whether an employee is likely to stay or leave, based on what the model returns. That means the model needs to be callable over HTTP, from a real application.

In this edition, we will turn that .pkl file into a real service that applications can call.

Prerequisites (model.pkl)

This edition assumes you have artifacts/model.pkl. If you followed along in the previous edition, you already have it.

If you are joining fresh or don't have it, grab it directly from the repo at inference/artifacts/model.pkl.

Copy it into your local artifacts/ folder and you are ready to follow along.

Project Structure

Pull the latest changes to get the new app and model deployment files.

cd mlops-for-devops/phase-1-local-dev

git pull origin main

Here is our current project structure with newly added files.

Setup Architecture

Our setup contains the following.

The Flask Frontend App: Deployed as a standard Kubernetes Deployment

The Inference Deployment: Deployed via KServe

Inference API (FastAPI + model.pkl)

model.pkl is just a file on disk. On its own, it can only be called from a Python script like the predict.py script we used in the previous edition. No HTTP, no JSON, no way for any other application to talk to it.

To make it callable over the network from a frontend, we need to wrap it with a service that exposes an HTTP endpoint. We use FastAPI for that.

The inference API has three key endpoints as shown below. /health and /ready are not optional, because KServe uses them to manage pod lifecycle and traffic routing. /predict is the actual inference call.

💡 Key Insight: Model Loading

The model loads at startup and stays in memory. Every request reuses the same in-memory object instead of reading model.pkl from disk on every call.

This is the same pattern used in production model serving systems. Models are loaded during service startup and reused for inference to ensure low latency and efficient performance.
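The load-once pattern can be sketched in a few lines of plain Python. This is an illustration only. The ModelHolder class and the dict of weights used as a "model" are hypothetical, chosen so the example runs without the real model.pkl or scikit-learn.

```python
import pickle
import tempfile
from pathlib import Path


class ModelHolder:
    """Loads a pickled model once and reuses the same object afterwards."""

    def __init__(self, path):
        self.path = Path(path)
        self.model = None
        self.disk_reads = 0  # counts how often the file was actually read

    def load(self):
        # Called a single time, at service startup.
        with self.path.open("rb") as f:
            self.model = pickle.load(f)
        self.disk_reads += 1

    def predict(self, features):
        if self.model is None:
            raise RuntimeError("model not loaded yet")
        # Stand-in scoring: the pickled object here is a plain dict of weights.
        return sum(self.model[name] * value for name, value in features.items())


# Write a tiny stand-in "model" so the sketch is self-contained.
demo_path = Path(tempfile.mkdtemp()) / "model.pkl"
demo_path.write_bytes(pickle.dumps({"overtime_hours": 0.5, "years_at_company": -0.1}))

holder = ModelHolder(demo_path)
holder.load()

# Three "requests" reuse the in-memory object; the file is never read again.
scores = [
    holder.predict({"overtime_hours": hours, "years_at_company": 2})
    for hours in (0, 4, 8)
]
print(scores, "disk reads:", holder.disk_reads)
```

In a web service, load() would run in the startup hook and predict() inside the request handler, so the cost of unpickling is paid once per pod rather than once per request.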

The Frontend App (Flask)

The Flask app is the UI layer. It renders the HTML form, takes the HR person's input, POSTs it to the KServe inference endpoint, and displays the result.

It has no model logic inside it, just a form and an HTTP call to the inference service.

If you have ever deployed a Node.js or Go web app on Kubernetes, this is no different. Flask handles routing and template rendering. The model prediction is just an HTTP call to another service, the same pattern as in any microservice architecture.
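As a rough sketch of that HTTP call, the snippet below builds and sends the POST using only the Python standard library. The real frontend may well use a different HTTP client. INFERENCE_URL is the variable named in this edition, while the default URL, the helper names, and the assumption that the URL already includes the /predict path are hypothetical.

```python
import json
import os
import urllib.request

# Hypothetical fallback for local experiments; the real value comes from
# the INFERENCE_URL environment variable set in the Deployment manifest.
DEFAULT_URL = "http://localhost:8080/predict"


def build_predict_request(form_data, base_url=None):
    """Turn the submitted form values into a JSON POST request."""
    url = base_url or os.environ.get("INFERENCE_URL", DEFAULT_URL)
    body = json.dumps(form_data).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def call_inference(form_data, timeout=5.0):
    """Send the request and return the decoded JSON response."""
    request = build_predict_request(form_data)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

A Flask route would call call_inference() with the form values and pass the returned dictionary to the template. No model code is needed on the frontend side.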

What Is KServe and Why Not Just Use a Deployment?

Here is the key question every DevOps engineer asks.

You already know how to deploy a container on Kubernetes. Why not just write a Deployment + Service + Ingress and be done with it?

Well, you could. But KServe gives you things that a basic Deployment doesn't.

KServe is a Kubernetes operator purpose-built for ML model serving. It handles operational concerns like scaling, traffic management, and observability.

Think of KServe as an Ingress Controller, but for ML models. Just like Nginx Ingress handles HTTP routing rules via Ingress objects, KServe handles model serving via InferenceService objects.

The following image illustrates how KServe fits into our workflow.

If you have worked with operators such as Prometheus Operator or Argo CD, KServe follows the same pattern. It installs a custom resource definition (CRD) into your cluster. You create an InferenceService object. The KServe controller watches for it and provisions the underlying Pods, Services, and routing rules automatically.

Now let's implement everything we have learned so far.

Dockerize The Model

First, we need to containerize model.pkl along with everything it needs to make predictions: dependencies like scikit-learn and numpy, and the predictor logic that loads and calls it.

The Dockerfile is inside the phase-1-local-dev/inference folder. It bakes model.pkl directly into the image at build time. The image is the versioned model artifact (1.0.0).

For this local setup, we push the image to Docker Hub so our local Kubernetes cluster (kind/minikube) can pull it.

Run the following commands from the phase-1-local-dev directory to build and push the image.

$ cd inference

$ docker build -t <your-dockerhub-username>/attrition-inference:1.0.0 .

$ docker push <your-dockerhub-username>/attrition-inference:1.0.0

Because model.pkl is baked in at build time, the image itself is the versioned artifact. Tag 1.0.0 always contains exactly the model trained in Step 3, so there are no surprises at runtime.

Dockerize The Frontend

Now, let's Dockerize the frontend.

You can find the frontend code inside the phase-1-local-dev/frontend folder. Run the following commands from the phase-1-local-dev folder to build and push the image.

$ cd frontend

$ docker build -t <your-dockerhub-username>/attrition-frontend:1.0.0 .

$ docker push <your-dockerhub-username>/attrition-frontend:1.0.0

Deploy KServe

To quickly install KServe on your cluster using Helm, run the following commands. KServe requires cert-manager, so we will install that as well.

Note: If you get a failed calling webhook "webhook.cert-manager.io" error during installation, restart the cert-manager-webhook deployment to force certificate regeneration. Then uninstall and reinstall KServe.

# Install cert-manager

$ kubectl apply -f https://github.com/cert-manager/cert-manager/releases/download/v1.19.0/cert-manager.yaml

# Install kserve in standard mode

$ helm install kserve-crd oci://ghcr.io/kserve/charts/kserve-crd --version v0.16.0 -n kserve --create-namespace

$ helm install kserve oci://ghcr.io/kserve/charts/kserve --version v0.16.0 --set kserve.controller.deploymentMode=Standard -n kserve

Deploy The KServe InferenceService

This is the only Kubernetes resource you need for the inference container. One YAML. From this single InferenceService object, the KServe controller creates a Deployment, Service, HPA, and routing config.

You can find the InferenceService manifest at phase-1-local-dev/k8s/inference.yaml.

Note: If you are using your own Docker image, replace the image URL accordingly. The image already present in the manifest also works.
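To give a feel for what such a manifest contains, here is a sketch that builds a minimal InferenceService object as a Python dictionary and prints it as JSON, which kubectl accepts just like YAML. This is not the repository's inference.yaml. The name employee-attrition is an assumption inferred from the service hostname mentioned in this edition, and ports, probes, and resource limits are left out.

```python
import json


def inference_service(name, image, namespace="default"):
    """Build a minimal KServe InferenceService with a custom predictor container."""
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "predictor": {
                # Custom container predictor: KServe runs our own image
                # instead of one of its built-in model servers.
                "containers": [
                    {"name": "kserve-container", "image": image}
                ]
            }
        },
    }


manifest = inference_service(
    name="employee-attrition",  # assumed name, check inference.yaml in the repo
    image="<your-dockerhub-username>/attrition-inference:1.0.0",
)
print(json.dumps(manifest, indent=2))
```

Piping the output into kubectl apply -f - would create the object, but for this series the checked-in YAML manifest remains the source of truth.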

To deploy the inference API, run the following commands from the phase-1-local-dev directory.

$ cd k8s

$ kubectl apply -f inference.yaml

$ kubectl get deploy,svc

You will see the inference Deployment and Service in the default namespace, backed by a running pod.

It also exposes the inference service at employee-attrition-predictor.default.svc.cluster. The frontend application reaches this internal endpoint through the INFERENCE_URL environment variable.

Deploy the Frontend

Now let's deploy the frontend app.

The frontend is a standard Flask web app. It serves the HTML form, takes the HR person's input, calls the inference endpoint and shows the result. It has no knowledge of the model, just an HTTP URL it POSTs to.

It reads INFERENCE_URL from an environment variable at runtime. You set this in the Deployment manifest.

You can find the frontend deployment file inside phase-1-local-dev/k8s/deployment.yaml.

To deploy the frontend, run the following commands from the phase-1-local-dev directory.

$ cd k8s

$ kubectl apply -f deployment.yaml

Now, if you list the pods, you can see an additional pod running in the default namespace.

It also creates a NodePort service on port 31010 so that we can access the UI.
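The two pieces described above, the INFERENCE_URL environment variable and the NodePort service on 31010, can be sketched as Kubernetes objects built in Python and emitted as JSON. This is an illustration, not the repository's deployment.yaml. The resource names, labels, container port 5000, and the placeholder inference URL are all assumptions.

```python
import json


def frontend_manifests(image, inference_url, node_port=31010, container_port=5000):
    """Build a Deployment plus a NodePort Service for the frontend."""
    labels = {"app": "attrition-frontend"}  # hypothetical label
    deployment = {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": "attrition-frontend"},
        "spec": {
            "replicas": 1,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": "frontend",
                            "image": image,
                            "ports": [{"containerPort": container_port}],
                            # The app reads this variable at runtime.
                            "env": [{"name": "INFERENCE_URL", "value": inference_url}],
                        }
                    ]
                },
            },
        },
    }
    service = {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": "attrition-frontend"},
        "spec": {
            "type": "NodePort",
            "selector": labels,
            "ports": [
                {"port": container_port, "targetPort": container_port, "nodePort": node_port}
            ],
        },
    }
    # A List lets kubectl apply both objects from one document.
    return {"apiVersion": "v1", "kind": "List", "items": [deployment, service]}


manifests = frontend_manifests(
    image="<your-dockerhub-username>/attrition-frontend:1.0.0",
    inference_url="http://<inference-service-host>/predict",  # placeholder
)
print(json.dumps(manifests, indent=2))
```

The point to notice is that the frontend only knows a URL. Swapping the inference backend means changing one environment variable, not rebuilding the image.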

Test the Deployments

To test the deployments, access the UI using the URL http://localhost:31010 as shown below.

Enter the values and click the Predict Attrition button. You will get the prediction values as follows.

Real World Context: What If the Model Is 15 GB?

Baking a 15 GB model into a Docker image and loading it entirely into memory is not realistic. A 15 GB image takes minutes to pull, burns memory on every pod replica, and makes scaling painful.

In production, large models are stored separately in a model registry or object storage (S3, GCS), and the container downloads the model at startup instead of carrying it inside the image. KServe supports this natively.

For very large models (LLMs, foundation models), you go further: model sharding across multiple GPUs, and dedicated serving runtimes like vLLM or Triton Inference Server instead of a plain FastAPI app.

For our employee attrition model, the file is a few KB. Baking it into the image is perfectly fine and keeps things simple for Phase 1.
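The idea of fetching the model at startup can be sketched as follows. This is a simplified illustration, not how KServe implements it. With KServe you would normally point the InferenceService at the model location through its storageUri field and let KServe download the files before the server starts. The function names and the file:// URI standing in for S3 or GCS are assumptions made so the example runs locally.

```python
import pickle
import shutil
import tempfile
import urllib.request
from pathlib import Path


def fetch_model(model_uri, cache_dir):
    """Download the model into cache_dir once and return the local path."""
    cache_dir = Path(cache_dir)
    cache_dir.mkdir(parents=True, exist_ok=True)
    local_path = cache_dir / "model.pkl"
    if not local_path.exists():
        # Stream the bytes to disk instead of holding them all in memory.
        with urllib.request.urlopen(model_uri) as src, local_path.open("wb") as dst:
            shutil.copyfileobj(src, dst)
    return local_path


def load_model(model_uri, cache_dir):
    """Fetch (if needed) and unpickle the model at service startup."""
    with fetch_model(model_uri, cache_dir).open("rb") as f:
        return pickle.load(f)


# Demo: a local file plays the role of the remote object store.
source = Path(tempfile.mkdtemp()) / "remote-model.pkl"
source.write_bytes(pickle.dumps({"version": "1.0.0"}))

cache = tempfile.mkdtemp()
model = load_model(source.as_uri(), cache)
print(model)
```

The image stays small because it carries only code and dependencies, and a new model version becomes a change to the URI rather than a new image build.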

What’s Next?

Next, we move into Phase 2. This is where we dive into the tools and workflows used in real enterprise ML setups.

We will look into concepts like dataset versioning, model retraining, experiment tracking, hyperparameter logging, and feature stores, using tools like Kubeflow and MLflow.

All the concepts we learned in Phase 1 will make those workflows much easier to understand. You will see how everything connects when the process becomes automated end to end.